The "Everything is a File" Tour of Linux

How I stopped fearing the terminal by deliberately breaking everything.

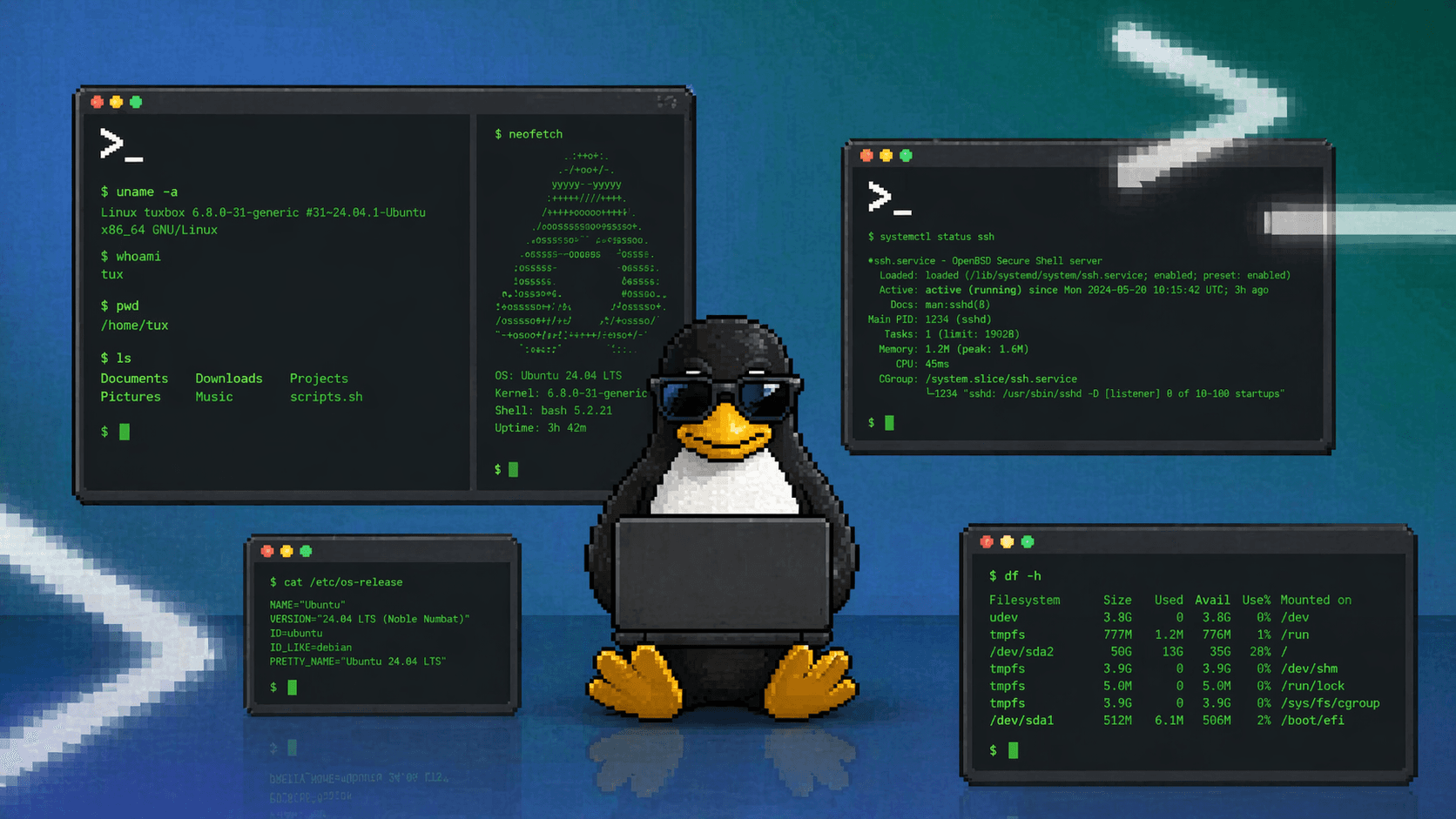

I wanted to overcome my command line phobia and join the 4% club of people who don't panic when they see a terminal and just type away in it. I wanted to feel like a hacker. Not the movie kind with three keyboards and cascading green text, but the kind who actually understands what their computer is doing. So I googled "How to learn Linux" and landed on the most mundane, beige documentation website I have ever seen in my life. I dropped into forums next, expecting a more human approach. The first post I found had someone saying "just build your own kernel." I just wanted to learn things, not go on a spiritual journey.

People told me Linux had a learning curve. Except this curve was a cliff.

If you want a 12-minute brush through Linux basics before diving into the weird stuff, Fireship made a video for exactly that.

Before we go down the rabbit hole, I suggest trying all of this on a VPS, a VM, or a Docker container, not your main machine. The reason is simple: some of what I will show you involves deliberately breaking things. Try to understand each command before copying it, because imagine pasting rm -rf / without knowing what it does. But if you are HIM, nobody is stopping you. On a throwaway container? You type exit, spin up a fresh one, and try again. Freedom to destroy without consequences is genuinely how you learn Linux fastest.

Two tools that will save you more than any tutorial

Before anything else: man and tldr. These are your cheat codes.

man is the built-in Linux manual. Almost every command, system file, and config format has a page. When you wonder what a flag does or what a file is for, man has the answer written by the people who built the thing.

man ls

man chmod

man 5 passwd # the 5 means file format manual, not the command

man resolv.conf # the config file itself has a man page

The problem with man is density. It was written by engineers for engineers. Enter tldr, a community-written alternative that gives you just the practical common cases.

apt install tldr

tldr ls

tldr chmod

tldr gives you five useful examples instead of fifty pages of specification. Use tldr when you want to quickly understand what something does. Use man when you need the full picture. If man is the textbook, tldr is the cheat sheet your classmate scribbled the night before the exam.

One more habit worth building early: when something fails, read the actual error message before googling. "Permission denied" means permissions. "No such file or directory" means the path is wrong or the file doesn't exist. "Command not found" means it is not installed or not in your PATH. Linux error messages are not trying to confuse you. They are telling you what happened.

What even is /etc

/etc is where Linux keeps its brain. Configuration for almost everything the system does lives here in plain text files you can open with any editor. I spent the first hour just running ls /etc, opening random files, and breaking things. That felt like finding an unlocked door in a building I thought was sealed.

DNS redirection or how I broke Google with one line

The first thing I tried in /etc was /etc/hosts. I wanted to reach my local dev server by name, as myapp.local:3000 instead of 127.0.0.1:3000 (the file maps names to addresses, so the port stays). Opening the file:

nano /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost

I added one line at the bottom and saved:

127.0.0.1 myapp.local

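It worked immediately. No restart, no daemon reload. The resolver reads the file on every lookup, and on a default setup (the hosts line in /etc/nsswitch.conf) it checks this file before asking any DNS server. Some applications, browsers especially, cache lookups, so they may need a moment to catch up.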
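To see the "file first" idea without touching the real /etc/hosts, here is a rough sketch that searches a scratch copy in the same format. The lookup_host function and the temp file are made up for illustration; real lookups go through the system resolver, which you can query with getent hosts myapp.local.

```shell
# Sketch only: mimic a hosts-file lookup against a scratch copy,
# so the real /etc/hosts stays untouched.
hosts_file=$(mktemp)
cat > "$hosts_file" <<'EOF'
127.0.0.1 localhost
::1 localhost ip6-localhost
127.0.0.1 myapp.local
EOF

# lookup_host NAME FILE: print the first address whose line lists NAME.
lookup_host() {
    awk -v name="$1" '
        /^[[:space:]]*#/ { next }                # skip comment lines
        { for (i = 2; i <= NF; i++)              # field 1 is the address
              if ($i == name) { print $1; exit } }
    ' "$2"
}

lookup_host myapp.local "$hosts_file"     # found in the file
lookup_host instagram.com "$hosts_file"   # prints nothing: DNS would be asked next
```

An empty result is the point where the real system would move on to the DNS server.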

Then I had an idea. What if I pointed instagram.com at localhost?

nano /etc/hosts

# add: 127.0.0.1 instagram.com

ping instagram.com

PING instagram.com (127.0.0.1) 56(84) bytes of data.

This is poor man's parental control. All of Meta's infrastructure, the entire DNS system, bypassed by one line in a text file on your machine. The whole internet is the fallback. This file runs first.

Imagine making your friend slowly lose their mind on a shared Linux machine: redirect their most visited sites to 127.0.0.1 in /etc/hosts. They will restart their wifi three times, blame their ISP, factory reset their router, and spend an hour in a chat with customer support before anyone thinks to check a text file.

/etc/resolv.conf: the file that keeps rewriting itself

Once I understood /etc/hosts, I wanted to know what happened when a hostname was not found there. That fallback is /etc/resolv.conf, which holds the actual DNS server address the system asks.

cat /etc/resolv.conf

# This file is managed by man:systemd-resolved(8). Do not edit.

nameserver 127.0.0.53

I had edited this on my machine and my changes kept vanishing. Now I understood why. systemd-resolved is a local DNS middleman running at 127.0.0.53. It manages this file and rewrites it whenever it restarts.

Let's break the internet:

echo "nameserver 0.0.0.0" > /etc/resolv.conf

ping google.com

ping: google.com: Temporary failure in name resolution

The machine is still connected to the network. It just cannot resolve any names because it is asking a DNS server that does not exist. apt update fails, curl fails, package installs fail. The network is fine, only name resolution is dead. This is what a misconfigured DNS server looks like from the inside.

# Fix it immediately

echo "nameserver 8.8.8.8" > /etc/resolv.conf

The permanent fix without the file fighting back is editing systemd-resolved's own config:

nano /etc/systemd/resolved.conf

# under [Resolve]:

# DNS=1.1.1.1

# FallbackDNS=8.8.8.8

systemctl restart systemd-resolved

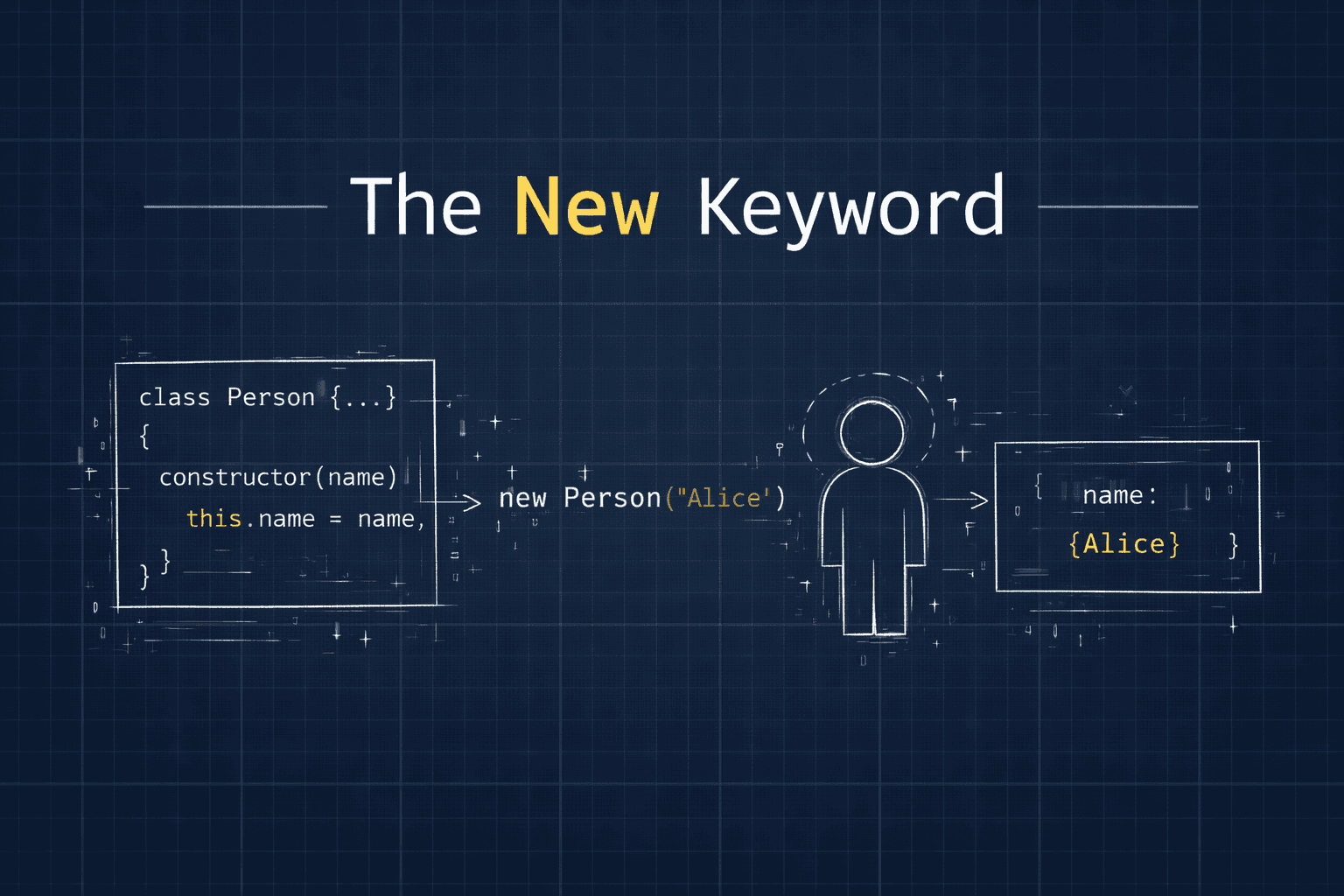

/etc/passwd and /etc/shadow: the user accounts are just a text file

I had heard that everything in Linux is treated as a file, from hardware devices to system configuration. That sounded strange to me until I started exploring it through /etc/passwd.

cat /etc/passwd

root:x:0:0:root:/root:/bin/bash

daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin

www-data:x:33:33:www-data:/var/www:/usr/sbin/nologin

saumya:x:1001:1001::/home/saumya:/bin/bash

# the above is in the form of name:password:UID:GID:GECOS:directory:shell (root:x:0:0:root:/root:/bin/bash)

Seven colon-separated fields per line: username, password placeholder, user ID, group ID, comment, home directory, login shell. The x in the second field means the actual password hash is stored in /etc/shadow, which only root can read.

sudo cat /etc/shadow | grep saumya

saumya:$6$rounds=4096$saltstring$hashedpassword:19820:0:99999:7:::

# 9 fields separated by ':'

The 9 fields are username, hashed password, date of last change, minimum age, maximum age, warning period, inactivity period, expiration date, and a reserved field.

$6$ means SHA-512. The rounds=4096 deliberately makes hashing slow. Slow hashing means brute-force attacks take longer. Your password is protected partly by intentional computational expense.
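The password field is itself a tiny record separated by dollar signs. A minimal sketch of pulling it apart, using a made-up value in the $6$rounds=...$salt$hash layout (not a real hash). It assumes the optional rounds= part is present; without it the pieces would shift one position.

```shell
# Made-up sample value in the same layout as a shadow password field.
hash_field='$6$rounds=4096$saltstring$hashedpassword'

# Split on "$". The string starts with "$", so the first piece is empty.
IFS='$' read -r empty algo_id rounds salt digest <<EOF
$hash_field
EOF

# Map the scheme id to a name (ids as documented in crypt(5)).
case "$algo_id" in
    1) algo="MD5" ;;
    5) algo="SHA-256" ;;
    6) algo="SHA-512" ;;
    y) algo="yescrypt" ;;
    *) algo="unknown" ;;
esac

echo "algorithm: $algo, $rounds, salt: $salt"
```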

Now the interesting part. Every account with /usr/sbin/nologin as its login shell cannot open an interactive session. Try to SSH in as www-data and you never get a shell: these accounts normally have no usable password, and even if authentication succeeded, nologin would print a refusal and exit. These accounts exist only so the processes running as them have a numeric user identity for permissions. They are not meant for humans to log into.
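Since the file is just colon-separated text, sorting humans from service accounts is a one-line awk job. A small sketch against a scratch file holding the same sample lines as the listing earlier; on a real machine you would point awk at /etc/passwd instead.

```shell
# Sample lines in /etc/passwd format, written to a scratch file.
passwd_sample=$(mktemp)
cat > "$passwd_sample" <<'EOF'
root:x:0:0:root:/root:/bin/bash
daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin
www-data:x:33:33:www-data:/var/www:/usr/sbin/nologin
saumya:x:1001:1001::/home/saumya:/bin/bash
EOF

# Field 1 is the username, field 7 the login shell.
# A shell ending in nologin or false means no interactive session.
login_users=$(awk -F: '$7 !~ /(nologin|false)$/ { print $1 }' "$passwd_sample")
service_users=$(awk -F: '$7 ~ /(nologin|false)$/ { print $1 }' "$passwd_sample")

# Unquoted echo joins the names onto one line.
echo "can log in: $(echo $login_users)"
echo "service-only: $(echo $service_users)"
```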

Next I wanted to try breaking it, so I changed my own login shell to /bin/false in /etc/passwd.

nano /etc/passwd

# find your line, change /bin/bash to /bin/false

When I tried to connect from a new terminal, the session closed the instant the shell started, because /bin/false exits immediately with a failure code. My account exists, the password is correct, the SSH connection succeeds, but I am immediately disconnected. I had locked myself out without deleting the account or changing the password.

I fixed it from the session that was still open by changing /bin/false back to /bin/bash.

/etc/ssh/sshd_config: watching bots try your front door

On any internet-facing VPS, run this after it has been up for a day:

grep "Failed password" /var/log/auth.log | wc -l

On a fresh VPS after a few hours, that number is often in the thousands. Automated bots scan every IP address on the internet looking for open port 22 and trying common username and password combinations. root, admin, ubuntu, 123456. Constantly, from everywhere.

All SSH behavior is controlled by /etc/ssh/sshd_config:

nano /etc/ssh/sshd_config

You will see a long file with settings for the port, login permissions, and authentication methods. Two lines matter immediately:

PasswordAuthentication no

PermitRootLogin no

Setting PasswordAuthentication no means SSH only accepts key-based authentication. The bots still knock but there is no password to brute-force, which removes the most common attack. Make sure your own key login works first, or you lock yourself out too. PermitRootLogin no means even with valid root credentials, nobody SSHs directly into root.

Want to watch the bots in real time:

tail -f /var/log/auth.log

Run that for five minutes on a fresh VPS. The attempts come in constantly from IP addresses across dozens of countries. It is mildly unsettling and also clarifying. This is just what the internet is like all the time.

After disabling passwords:

systemctl restart sshd

grep "Failed password" /var/log/auth.log | tail -5

# these are the old attempts; bots still try, but now they fail before guessing anything

With passwords disabled, the bots still connect, but the server no longer offers password authentication at all. They are turned away before they can submit a single guess.
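Counting lines tells you how loud the noise is; grouping by address tells you who is making it. A sketch over a few made-up log lines in the usual sshd format (the addresses come from documentation-only ranges). On a real VPS, swap the scratch file for /var/log/auth.log.

```shell
# Made-up sample lines in the shape sshd writes to auth.log.
sample_log=$(mktemp)
cat > "$sample_log" <<'EOF'
Jan 10 03:12:01 vps sshd[1201]: Failed password for root from 203.0.113.7 port 40122 ssh2
Jan 10 03:12:04 vps sshd[1203]: Failed password for invalid user admin from 203.0.113.7 port 40130 ssh2
Jan 10 03:12:09 vps sshd[1207]: Failed password for root from 198.51.100.23 port 51514 ssh2
Jan 10 03:12:10 vps sshd[1207]: Connection closed by 198.51.100.23 port 51514 [preauth]
Jan 10 03:14:02 vps sshd[1215]: Failed password for invalid user ubuntu from 203.0.113.7 port 40188 ssh2
EOF

# Keep only failed logins, cut out the source address,
# then count attempts per address, busiest first.
top_offenders=$(grep "Failed password" "$sample_log" \
    | sed -E 's/.* from ([^ ]+) port .*/\1/' \
    | sort | uniq -c | sort -rn)
echo "$top_offenders"
```

The first line of the output is the address that tried the most often.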

/etc/fstab: the file that can stop your machine from booting

/etc/fstab is read at boot. Linux mounts everything listed here. USB drives, additional partitions, network shares. Get an entry wrong and the machine may not boot.

cat /etc/fstab

# in the order

# file system, mount point, type, options, dump and pass

UUID=abc123 / ext4 defaults 0 1

UUID=def456 /boot ext4 defaults 0 2

tmpfs /tmp tmpfs defaults 0 0

UUID is used instead of the device name because a name like /dev/sda1 can change between boots, but the UUID won't. It acts as the Aadhaar number of the filesystem.

The experiment:

nano /etc/fstab

# add at the bottom:

broken-entry-here

'broken-entry-here' is a string of text without the columns. When the mount command encounters it, it has no idea what the device is, and where it should be mounted.

Now test without rebooting:

mount -a

This mounts everything in /etc/fstab that is not already mounted, which is close to a rehearsal of what happens at boot.

mount: /: can't find broken-entry-here.

Error. Exactly what you would get on boot if you saved this and restarted. Fix it by removing or commenting out the broken line, then run mount -a again to confirm it passes.

The rule: always test with mount -a before rebooting when you touch /etc/fstab. Always. A bad fstab entry drops you into recovery mode or an emergency shell with no obvious explanation. Keep a mental note that this file exists and kills boots if you get it wrong.
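If you want a check that does not mount anything at all, a crude field count already catches entries like the broken one above. This is only a sketch on a scratch copy: check_fstab is a made-up helper, and it only looks at the number of columns, not whether devices or mount points exist. Where it is available, findmnt --verify does a far more thorough job.

```shell
# Scratch copy in fstab format, including the deliberately broken line.
fstab_copy=$(mktemp)
cat > "$fstab_copy" <<'EOF'
# file system  mount point  type  options  dump  pass
UUID=abc123 / ext4 defaults 0 1
UUID=def456 /boot ext4 defaults 0 2
tmpfs /tmp tmpfs defaults 0 0
broken-entry-here
EOF

# check_fstab FILE: report non-comment lines with fewer than 4 fields.
# dump and pass may be left out, so 4 is the minimum used here.
check_fstab() {
    awk '
        /^[[:space:]]*(#|$)/ { next }            # skip comments and blank lines
        NF < 4 { printf "line %d looks malformed: %s\n", NR, $0; bad = 1 }
        END { exit bad }                         # non-zero status if anything was flagged
    ' "$1"
}

check_fstab "$fstab_copy" || echo "fix the file before running mount -a or rebooting"
```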

/proc: the folder that is not actually on disk

/proc is a virtual filesystem. There are no files here stored on disk anywhere. When you read something from /proc, the kernel generates the content on the spot from its own live internal data. It is a window into running system state, not stored data.

cat /proc/meminfo

Run it twice, ten seconds apart. The numbers change. Because it is live.

cat /proc/cpuinfo | grep "flags" | head -1

The flags line lists the capabilities the processor supports, such as vmx, aes, and sse. vmx means Intel hardware virtualization is available (AMD CPUs show svm instead). If you try to run KVM and it refuses, check for this flag. aes means the CPU has built-in AES hardware acceleration. Disk encryption and TLS are genuinely faster because of this single flag. sse and avx mean the CPU has SIMD extensions, which speed up heavy computation such as video encoding.

The per-process data is where things get interesting. Every running process gets its own directory:

# Find something running

ps aux | grep nginx

# Say it's PID 1234

ls /proc/1234/

cmdline cwd environ exe fd maps mem net status

fd/ lists every file descriptor the process has open right now. Log files, sockets, pipes, all of it as symlinks. environ has the exact environment variables the process launched with. cmdline shows the full command that started it. status has memory use, current state, which user it runs as.

cat /proc/1234/cmdline | tr '\0' ' '

ls -la /proc/1234/fd/

The kernel stores command arguments separated by null bytes (\0) to avoid confusion with spaces inside arguments. tr swaps those null bytes for spaces to make the output readable.
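The reason for null bytes is easier to see with an argument that contains a space. A small sketch using a fake cmdline file built by hand (the process and its arguments are made up), so it runs anywhere without needing a real PID.

```shell
# Build a fake cmdline the way the kernel lays it out: each argument
# followed by a NUL byte. The third argument contains a space.
fake_cmdline=$(mktemp)
printf 'nginx\0-g\0daemon off;\0' > "$fake_cmdline"

# Swapping NUL for space is readable but loses the argument boundaries.
tr '\0' ' ' < "$fake_cmdline"; echo

# Swapping NUL for newline keeps one argument per line.
tr '\0' '\n' < "$fake_cmdline"
arg_count=$(tr '\0' '\n' < "$fake_cmdline" | wc -l)
echo "arguments: $arg_count"
```

With spaces you cannot tell whether "daemon off;" was one argument or two. With newlines the count comes out right.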

When you delete a file while a process holds it open, the data stays on disk. The file is gone from ls but the space stays allocated until the process releases its file descriptor. In Linux, a filename is just a link to a block of data, so deleting only removes the name. As long as a process has a file descriptor open, the data blocks are kept alive. By redirecting into that descriptor under /proc, you tell the kernel to free the actual data blocks while leaving the 'ghost' handle intact.

Find these with lsof | grep deleted. Fix without restarting the process:

> /proc/1234/fd/4

That truncates the held-open file to zero bytes through the process's own descriptor. Disk space reclaimed, process uninterrupted.
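You can reproduce the "deleted but still alive" state in a few lines, with the current shell playing the role of the process that holds the file open. A minimal sketch using a scratch file; descriptor number 3 is an arbitrary choice.

```shell
scratch=$(mktemp)
echo "still here" > "$scratch"

exec 3< "$scratch"     # open the file on descriptor 3 in this shell
rm "$scratch"          # remove the name; the data is still referenced by fd 3

[ -e "$scratch" ] || echo "name is gone from the directory"
held_content=$(cat <&3)   # the data is still readable through the descriptor
echo "read via fd 3: $held_content"

exec 3<&-              # close the descriptor; only now can the space be freed
```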

Routing: where packets actually go

The routing table is what your machine consults before sending any packet. It decides which network interface and which gateway to use.

ip route show

default via 192.168.1.1 dev eth0 proto dhcp src 192.168.1.100 metric 100

# local lane

192.168.1.0/24 dev eth0 proto kernel scope link src 192.168.1.100

The default line handles everything else. It says "for any destination I don't have a specific rule for, send it to 192.168.1.1." That is your router. Every internet-bound packet passes through that rule.

Delete it and watch what happens:

ip route del default

ping google.com

connect: Network is unreachable

The machine is still on the network. It just cannot reach anything outside the local subnet because there is no default route. This is one of the first things to check when a server cannot reach the internet. The default route may simply not exist, perhaps because a VPN misconfigured it, or because someone ran ip route flush without knowing what it did.

ip route add default via 192.168.1.1

# put it back

# Most useful debugging command for routing

ip route get 8.8.8.8

8.8.8.8 via 192.168.1.1 dev eth0 src 192.168.1.100

That one line is the complete answer: which interface and gateway a packet would use to reach that destination.
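The "does a default route exist" check is easy to script. A sketch that parses sample text in the same format as the routing table shown earlier; on a live system you would feed it the output of ip route show instead of the sample string.

```shell
# Sample routing table text (same format as ip route show prints).
routes='default via 192.168.1.1 dev eth0 proto dhcp src 192.168.1.100 metric 100
192.168.1.0/24 dev eth0 proto kernel scope link src 192.168.1.100'

# The gateway is the third word on the line that starts with "default".
gateway=$(printf '%s\n' "$routes" | awk '$1 == "default" { print $3; exit }')

if [ -n "$gateway" ]; then
    echo "default gateway: $gateway"
else
    echo "no default route: nothing outside the local subnet is reachable"
fi
```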

/dev: the devices that are not devices

/dev/null, /dev/zero, /dev/random all live in the same directory as actual hardware. They have the same character device type marker. None of them are physical hardware.

/dev/null is a black hole. Write anything to it, and it is gone silently. Read from it, you get immediate end-of-file. This is what command > /dev/null 2>&1 does: stdout goes to the black hole, 2>&1 sends stderr to wherever stdout is going (also the black hole).

The numbers represent 'streams.' 1 is standard output (normal text), and 2 is standard error. 2>&1 means, take all the errors and send them into the same pipe where the normal text is going. Since that pipe leads to /dev/null, the command becomes perfectly silent.

# Silence all output but still check if it worked

some_noisy_command > /dev/null 2>&1

echo $? # 0 means success, anything else means it failed
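One detail that bit me: the order of the two redirections matters, because the shell applies them left to right. A small sketch with a made-up noisy function standing in for a real command, captured with $( ) so you can see what leaks through.

```shell
# A stand-in for a noisy command: one line on stdout, one on stderr.
noisy() {
    echo "normal output"
    echo "error output" >&2
}

# Redirections are applied left to right.
silent=$(noisy > /dev/null 2>&1)     # stdout to the black hole, then stderr follows it
leaky=$(noisy 2>&1 > /dev/null)      # stderr copies the OLD stdout first, then stdout moves

echo "silent captured: '$silent'"
echo "leaky captured:  '$leaky'"
```

In the second form stderr was pointed at wherever stdout was going at that moment, so the error line survives.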

/dev/zero produces infinite zero bytes. Good for filling disks or creating blank test files:

fallocate -l 500M bigfile # faster for large files

# or the /dev/zero version:

dd if=/dev/zero of=bigfile bs=1M count=500

df -h # watch disk fill up

rm bigfile

fallocate is near-instant because it just tells the filesystem to reserve the space without writing any data. dd if=/dev/zero actually reads millions of zero bytes and writes them to disk block by block.

/dev/urandom produces cryptographically secure random bytes from the kernel's random number generator, which is seeded with hardware entropy:

cat /dev/urandom | tr -dc 'a-zA-Z0-9' | head -c 24

echo

# or the cleaner version:

openssl rand -base64 18

The thing that made this concrete for me: the same read() system call your code uses to read a text file reads random bytes from /dev/urandom. Same interface but completely different behavior underneath. That is when the "everything is a file" idea became real.

/boot: where the kernel lives

ls /boot

vmlinuz-5.15.0-91-generic

initrd.img-5.15.0-91-generic

grub/

config-5.15.0-91-generic

vmlinuz is the compressed Linux kernel (vm = virtual memory, z = compressed, as in gzip). The actual operating system, stored as a file. initrd.img is a temporary RAM filesystem that loads before the real root filesystem is mounted and acts as a bridge during boot. grub/ holds the bootloader files and the menu that lets you choose between kernels or operating systems.

cat /boot/config-$(uname -r) | grep CONFIG_EXT4

CONFIG_EXT4_FS=y

That file is the kernel build configuration. Every driver, every feature, listed. Most of it is cryptic without context but searching it teaches you what the kernel actually supports.

The only advice here: do not delete anything from /boot. Deleting vmlinuz means the computer cannot boot at all. Recovery requires a live USB. This is the one folder in the filesystem where curiosity should not turn into experiments.

Consider: /etc is the brain, /proc is the nervous system, and /boot is the heart of Linux.

/var/log: the system's diary

/var/log is where the machine writes what happened. Everything.

ls /var/log

auth.log syslog kern.log dpkg.log

nginx/ apt/ journal/

auth.log records every login, failed or successful. syslog is the general system log. kern.log has kernel messages. dpkg.log records every package installed or removed and when.

Some logs, like syslog, are plain text files you can cat. Others live in the journal/ directory, which stores logs in a binary format to make them searchable and faster to filter. The journalctl command reads these binary logs.

tail -f /var/log/syslog # live system events

journalctl -u nginx -f # live logs for a specific service

journalctl -xe # recent journal with explanations

journalctl --since "1 hour ago" # last hour of everything

When something breaks, look for the log of the specific thing that broke. It almost always contains the exact error. If logs kept growing forever, they’d fill your entire disk, so Linux uses a tool called logrotate that periodically rotates old logs, compresses them into .gz files, and eventually deletes them.

/etc/systemd/system/: writing a service that survives crashes

Systemd starts everything on boot and manages services. Unit files in /etc/systemd/system/ tell it what to run.

I wanted a script running on boot that restarted if it crashed. Cron can run on boot with @reboot but has no crash recovery. A service file does:

nano /etc/systemd/system/myapp.service

[Unit]

Description=My app

After=network.target

[Service]

Type=simple

User=saumya

WorkingDirectory=/home/saumya/app

ExecStart=/usr/bin/node server.js

Restart=on-failure

RestartSec=5

StandardOutput=journal

StandardError=journal

[Install]

WantedBy=multi-user.target

The After=network.target line tells systemd to start this app only after basic networking has been set up. It is an ordering hint, not a guarantee that the connection is fully up; systemd has network-online.target for services that need that.

Unless told otherwise, system services run as root, which is a security risk. If the app gets hacked, User= means the attacker is confined to what that user account can do instead of having root over the whole machine. WorkingDirectory ensures that if the code looks for a file in ./config, it's looking in the right folder.

systemctl daemon-reload

systemctl enable myapp

systemctl start myapp

systemctl status myapp

Restart=on-failure is the important line. If the process crashes, it comes back after 5 seconds because of RestartSec=5, which you can adjust. If you stop it with systemctl stop, it stays stopped. The distinction between a crash and an intentional stop is handled automatically.
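The idea behind Restart=on-failure fits in a tiny shell loop: relaunch while the exit status is non-zero, stop on a clean exit. This is only a toy to show the concept, not how systemd works internally (systemd also handles signals, restart limits, and much more). flaky_app is a made-up stand-in that fails twice and then exits cleanly.

```shell
# flaky_app: a made-up stand-in that "crashes" twice, then exits cleanly.
attempts_file=$(mktemp)
echo 0 > "$attempts_file"
flaky_app() {
    n=$(( $(cat "$attempts_file") + 1 ))
    echo "$n" > "$attempts_file"
    [ "$n" -ge 3 ]            # non-zero exit status on runs 1 and 2
}

restart_sec=0                 # the unit file uses RestartSec=5; 0 keeps the demo quick

# Keep relaunching while the exit status is non-zero; a clean exit ends the loop.
until flaky_app; do
    echo "exited with failure, restarting in ${restart_sec}s"
    sleep "$restart_sec"
done

starts=$(cat "$attempts_file")
echo "clean exit after $starts starts"
```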

Logs for this service:

journalctl -u myapp -f

PATH: why commands vanish when you break one variable

PATH tells the shell where to look for executables. When you type ls, the shell searches each directory in PATH in order until it finds a file named ls. If PATH is wrong, every external command fails.

export PATH=""

ls

bash: ls: command not found

Everything is gone, apart from shell builtins like cd and echo. The binaries still exist on disk, but the shell just lost its map. It can be fixed with:

export PATH=/usr/bin:/bin:/usr/sbin:/sbin

ls # back

Why this matters beyond the experiment: cron jobs run with a stripped PATH by default. If your script calls something in /usr/local/bin and cron's PATH does not include that directory, the cron job silently fails with "command not found" while the exact same script works fine in your terminal. Set PATH explicitly in your crontab:

PATH=/usr/local/bin:/usr/bin:/bin

* * * * * /home/saumya/myscript.sh

If you’re ever unsure where a command is actually coming from, use which. Running which ls shows the exact path found by searching PATH. It is also a quick way to verify that the map is working.
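The cron problem can be reproduced without waiting for cron. A sketch that puts a made-up tool (mytool-demo) in a scratch directory standing in for /usr/local/bin, then looks it up with and without that directory in PATH. The subshells keep the PATH changes from leaking into your session.

```shell
# A made-up tool in a scratch directory standing in for /usr/local/bin.
tool_dir=$(mktemp -d)
printf '#!/bin/sh\necho "mytool ran"\n' > "$tool_dir/mytool-demo"
chmod +x "$tool_dir/mytool-demo"

cron_path=/usr/bin:/bin       # the stripped PATH described above

# With the cron-like PATH the shell cannot find the tool: exit status 127.
( PATH=$cron_path; mytool-demo ) 2>/dev/null
status_without=$?

# Add the directory and the same name resolves; command -v prints where.
found_at=$( PATH=$tool_dir:$cron_path; command -v mytool-demo )

echo "without the directory: exit status $status_without"
echo "with the directory: found at $found_at"
```

Exit status 127 is the shell's code for "command not found", which is worth grepping for in cron mail and logs.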

Permissions

Permissions in theory are chmod, rwx, and octal numbers. In practice I kept getting them wrong until I broke something on purpose.

echo '#!/bin/bash

echo "this ran"' > myscript.sh

./myscript.sh

bash: ./myscript.sh: Permission denied

The file exists and the content is correct, but newly created files have no execute permission. Check with:

ls -la myscript.sh

# -rw-r--r-- 1 saumya saumya 30 myscript.sh

No x anywhere. Add it:

chmod +x myscript.sh

./myscript.sh

# this ran

Now the more interesting break. Remove execute from ls itself:

chmod -x /bin/ls

ls

bash: /bin/ls: Permission denied

ls still exists on disk. It just cannot be executed. From the shell's perspective it might as well not be there. Fix:

chmod +x /bin/ls

The three positions (owner, group, others) mean the same file behaves differently depending on who runs the command. When your web server returns 403 errors and logs say "permission denied," this is why. The files exist, the server is running, but www-data does not have the permission to read them.

chown -R www-data:www-data /var/www/html

chmod -R 755 /var/www/html

For a file, +x means you can run it. For a directory, +x means you can enter it with cd and reach the files inside.

Linux leans on the Principle of Least Privilege (PoLP), so avoid the temptation to run chmod 777.
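The octal numbers stopped being magic for me once I printed them next to the rwx string. A sketch in a scratch directory; it assumes GNU stat (the -c option) and sets umask 022 explicitly so the starting mode is predictable.

```shell
umask 022                         # assumed default; new files start as rw-r--r--
workdir=$(mktemp -d)
script="$workdir/myscript.sh"
printf '#!/bin/sh\necho "this ran"\n' > "$script"

mode_before=$(stat -c '%a' "$script")   # GNU stat: octal mode
[ -x "$script" ] || echo "not executable yet ($mode_before)"

chmod +x "$script"
mode_after=$(stat -c '%a' "$script")
[ -x "$script" ] && echo "executable now ($mode_after)"

# Each octal digit is a sum per position: r=4, w=2, x=1.
# 640 = owner rw- (4+2), group r-- (4), others --- (0).
chmod 640 "$script"
mode_string=$(stat -c '%A' "$script")   # GNU stat: rwx-style string
echo "640 looks like $mode_string"
```

640 is a handy pattern for config files that a service group should read and nobody else should see.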

Environment variables

A friend set a variable in ~/.bashrc. It worked in his terminal. His systemd service couldn't see it, and hours of debugging followed.

Every process inherits environment variables from its parent. Your terminal runs an interactive shell, which sources ~/.bashrc, which sets your variables. Systemd (PID 1) starts services, and systemd never reads your ~/.bashrc. The variables your terminal has, the service has never heard of.

Your terminal reads ~/.bashrc and /etc/profile and has everything you configured. A cron job reads almost nothing. PATH is /usr/bin:/bin. Your tools in /usr/local/bin are invisible. A systemd service reads only what you explicitly declare in its unit file under [Service].

For services, declare variables in the unit file:

[Service]

Environment=NODE_ENV=production

EnvironmentFile=/etc/myapp/.env

For cron, set PATH at the top of the crontab or use absolute paths everywhere. For your own sessions, ~/.bashrc works. The confusion only happens when you expect one environment to be visible in another. In Linux, explicit is better than implicit. If a service needs a variable, tell the service directly.
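Inheritance is easy to test from a terminal. A short sketch: one variable is set but not exported, one is exported, and env -i launches a child with an empty environment, which is roughly the position a service or cron job starts from. The variable names are made up, apart from NODE_ENV from the unit file above.

```shell
# A plain shell variable stays in this shell; an exported one is inherited.
LOCAL_ONLY="set but not exported"
export SHARED="exported"

child_sees_local=$(sh -c 'echo "${LOCAL_ONLY:-<unset>}"')
child_sees_shared=$(sh -c 'echo "${SHARED:-<unset>}"')

# env -i starts the child with an empty environment.
clean_sees_shared=$(env -i /bin/sh -c 'echo "${SHARED:-<unset>}"')

# Declaring the variable for that one process is the explicit fix,
# the same idea as Environment= in a unit file.
declared=$(env -i NODE_ENV=production /bin/sh -c 'echo "$NODE_ENV"')

echo "child, not exported:  $child_sees_local"
echo "child, exported:      $child_sees_shared"
echo "clean env, exported:  $clean_sees_shared"
echo "clean env, declared:  $declared"
```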

Terminal shortcuts worth memorizing now

Ctrl+R searches command history interactively. Start typing any part of a command you ran before. This is faster than retyping long paths.

cd - goes to the last directory you were in. Jump between two locations without retyping either path.

!! repeats the last command. The classic use is sudo !! when you forgot sudo.

Ctrl+A and Ctrl+E jump to the start and end of the current line. Ctrl+W deletes the last word backwards. These three reduce terminal frustration noticeably.

When a config file edit breaks something and you want to know exactly what changed: diff /etc/nginx/nginx.conf.bak /etc/nginx/nginx.conf. Keep backups before editing important files. cp nginx.conf nginx.conf.bak takes two seconds.

I found two resources that help with the Linux learning curve:

Linux Journey : An organized learning path that breaks the complexities of the Linux operating system into digestible stages.

Bandit : A wargame designed to teach the fundamentals of the Linux command line. It looks boring, but it is really interesting to follow through.

References

Fireship: Linux in 100 Seconds : start here if you haven't

Linux man pages : every file and command has one

tldr pages : install with apt install tldr, use it for every new command

Arch Wiki : the best Linux documentation on the internet, useful for most distros regardless of what you're running

explainshell.com : paste any command and it breaks down every flag visually

The filesystem docs : official kernel documentation