MongoDB is Great. Mongoose Makes it Better.

Mastering the tools that bring structure, validation, and advanced analytics to your JavaScript backend.

When I first started learning backend development, the database question came up almost immediately. Everyone has an opinion. SQL people will tell you it is the only correct way to store data. NoSQL people will tell you SQL is legacy thinking. I got confused fast, and honestly I am glad to have started with MongoDB.

MongoDB is not always the right choice, but for a JavaScript developer learning backend for the first time, the mental model clicks in a way that SQL tables do not. Your data looks like objects. Your queries feel like JavaScript. You do not have to learn an entirely separate language just to get something saved and retrieved. You write code that looks like this:

const user = {

name: "Abc",

email: "abc@example.com",

age: 28

}

And that is also, more or less, exactly what gets stored. There is no translation layer in your head where you have to think "okay, this object maps to these columns in this table with these foreign keys." It is just data, shaped the way you already think about data in JavaScript.

That familiarity is MongoDB's biggest advantage when you are starting out. It gets out of your way.

What is MongoDB

MongoDB is a database, but it stores data differently from databases like MySQL or PostgreSQL. Those are relational databases that store data in tables with rows and columns, like a spreadsheet. MongoDB is a document database. It stores data as documents, which are essentially JSON objects, and groups them into collections instead of tables.

So instead of a users table where every row has the same columns, you have a users collection where each document can have a slightly different shape. One user might have a phone field, another might not. One might have a nested address object, another might not. MongoDB simply stores whatever fields it receives.

Each document automatically gets an _id field. If you do not supply one, it is generated for you as an ObjectId, and it must be unique within the collection. You use _id to fetch a specific document.
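As a rough illustration of what an ObjectId carries, the sketch below checks that a string has the usual 24-character hexadecimal form and reads the creation time stored in its first four bytes. The helper names looksLikeObjectId and objectIdTimestamp are made up for this example; they are not part of MongoDB or Mongoose.

```javascript
// Hypothetical helpers for illustration; not part of MongoDB or Mongoose.

// An ObjectId is 12 bytes, usually written as 24 hexadecimal characters.
function looksLikeObjectId(value) {
  return typeof value === 'string' && /^[0-9a-fA-F]{24}$/.test(value);
}

// The first 4 bytes (8 hex characters) hold the creation time
// as seconds since the Unix epoch.
function objectIdTimestamp(hex) {
  if (!looksLikeObjectId(hex)) {
    throw new TypeError('expected a 24-character hex string');
  }
  const seconds = parseInt(hex.slice(0, 8), 16);
  return new Date(seconds * 1000);
}

console.log(looksLikeObjectId('507f1f77bcf86cd799439011')); // true
console.log(looksLikeObjectId('not-an-id'));                // false
console.log(objectIdTimestamp('507f1f77bcf86cd799439011').toISOString());
// 2012-10-17T21:13:27.000Z
```

A format check like this is also handy for rejecting a malformed id from a URL before it ever reaches a query.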

The flexibility is real when your application is young and you are still figuring out what data you actually need. You can add a field to some documents without running a migration script. You can change your mind about the shape of your data without a painful database alteration. When you are building version one of something and requirements are shifting every week, this matters.

That said, the flexibility has a cost. Nothing stops two developers on your team from storing the same concept under different field names. Nothing stops your code from saving a string where a number was expected. MongoDB will happily store anything, and you find out something was wrong not when you save the data, but when you try to read it three weeks later and it comes back in an unexpected shape.

This problem gets solved by Mongoose.

Why you cannot just use MongoDB directly in your Node app

Technically, you can. MongoDB has an official Node.js driver that lets you connect and run queries without any extra library. But the raw driver gives you back plain JavaScript objects with no type information. Your editor cannot autocomplete field names. If you make a typo, nothing warns you. If someone saves { eMail: "..." } instead of { email: "..." }, the database accepts it without complaint.

For a small personal project, that is probably fine. For anything with more than one developer, or anything you want to maintain six months from now, you start wanting structure.

Mongoose is a library that sits between your Node.js code and MongoDB. It is often called an ODM (Object Document Mapper), which is the document database equivalent of an ORM. You define the shape of your data in code, and the library makes sure anything going to the database matches that shape. You get schemas, validation, methods on documents, hooks that run before or after operations, and a proper query API.

Setting up the connection

Before any schema work, you connect. Mongoose wraps MongoDB's connection handling so you do it once and every model you define automatically uses that connection.

// db.js

import mongoose from 'mongoose';

async function connectDB() {

try {

await mongoose.connect(process.env.MONGO_URI);

console.log('Connected to MongoDB');

} catch (err) {

console.error('MongoDB connection failed:', err);

process.exit(1);

}

}

export default connectDB;

// server.js

import 'dotenv/config';

import connectDB from './db.js';

async function startServer(){

await connectDB();

}

startServer();

The connection string points to your MongoDB instance and names the database. For a local setup it looks like mongodb://localhost:27017/foodapp. For MongoDB Atlas, it comes from the dashboard and works identically. Keep it in a .env file that is listed in .gitignore. Hardcoding it is tolerable while learning, but never commit credentials to Git.
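One way to catch a missing connection string early is to check the environment before calling mongoose.connect(). This is a minimal sketch: readMongoUri is a hypothetical helper, and the only thing taken from the setup above is the MONGO_URI variable name.

```javascript
// Hypothetical helper: returns the connection string or fails fast
// with a readable error. `env` defaults to process.env in Node.js.
function readMongoUri(env = process.env) {
  const uri = env.MONGO_URI;
  if (typeof uri !== 'string' || uri.trim() === '') {
    throw new Error('MONGO_URI is not set. Add it to your .env file.');
  }
  // Connection strings use the mongodb:// or mongodb+srv:// scheme.
  if (!/^mongodb(\+srv)?:\/\//.test(uri)) {
    throw new Error('MONGO_URI should start with mongodb:// or mongodb+srv://');
  }
  return uri;
}

console.log(readMongoUri({ MONGO_URI: 'mongodb://localhost:27017/foodapp' }));
// mongodb://localhost:27017/foodapp
```

Passing the environment in as a parameter keeps the check easy to test; in the app you would call readMongoUri() with no arguments and hand the result to mongoose.connect().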

Writing a schema

A Mongoose schema is where you tell your application what a document is supposed to look like. You create one with new mongoose.Schema({}), passing an object where each key is a field name and the value describes the expected type.

We will build a food delivery app through this post. The user schema starts simple:

// models/user.model.js

import { Schema, model } from 'mongoose';

const userSchema = new Schema({

name: String,

email: String,

phone: String,

age: Number,

role: String,

isActive: Boolean,

});

export const User = model('User', userSchema);

The model() call is what turns a schema into something you can actually use. The name you pass, 'User', determines the MongoDB collection name. Mongoose lowercases and pluralizes it automatically, so documents land in a collection called users.

Using the model looks exactly like working with a class:

import { User } from './models/user.model.js';

async function createUser() {

const user = new User({

name: 'John Doe',

email: 'john@example.com',

phone: '9999999999',

age: 25,

});

await user.save();

console.log(user._id); // MongoDB auto-generates a unique ID

}

createUser();

This works, but the schema above does not enforce much. You can save a user with no email, an age of -500, or a role called 'supervillain'. Mongoose lets it all through because you only told it the type, not the rules. For that, you need constraints.

Adding constraints

Constraints are what make a schema actually useful. Instead of just passing a type, you pass an object with the type and whatever rules you want enforced.

const userSchema = new Schema({

name: {

type: String,

required: [true, 'Name is required'],

trim: true,

minlength: [2, 'Name must be at least 2 characters'],

maxlength: [60, 'Name cannot exceed 60 characters'],

},

email: {

type: String,

required: [true, 'Email is required'],

unique: true,

lowercase: true,

trim: true,

match: [/^\S+@\S+\.\S+$/, 'Please enter a valid email'],

},

phone: {

type: String,

match: [/^[6-9]\d{9}$/, 'Enter a valid 10-digit mobile number'], // Regex for Indian phone no.

},

age: {

type: Number,

min: [16, 'Must be at least 16 to order'],

max: [120, 'Please enter a valid age'],

},

role: {

type: String,

enum: ['customer', 'rider', 'admin'],

default: 'customer',

},

isActive: {

type: Boolean,

default: true,

},

}, {

timestamps: true,

});

The timestamps: true option automatically adds createdAt and updatedAt fields to every document and keeps updatedAt current on every save, without you having to remember to do it manually.

When validation fails, Mongoose throws a ValidationError before the document reaches MongoDB. The error includes your custom message, so you can send it directly back to the user:

try {

const bad = new User({ name: 'A', email: 'notanemail' });

await bad.save();

} catch (err) {

console.log(err.errors.name.message);

// "Name must be at least 2 characters"

console.log(err.errors.email.message);

// "Please enter a valid email"

}

One thing worth knowing about unique: true: it is not a validator. It tells Mongoose to create a unique index in MongoDB, and the database enforces it. The error looks different too: instead of a ValidationError, you get an error with err.code === 11000.

Sometimes the built-in options are not enough. You can pass a custom validator function for any field:

deliveryPincode: {

type: String,

validate: {

validator: function(v) {

return /^\d{6}$/.test(v);

},

message: (props) => `${props.value} is not a valid pincode`,

},

},
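Because a custom validator is an ordinary function, you can try it out without a database. The sketch below pulls the same pincode rule into a standalone object; pincodeRule is a name chosen for this example, and the props object is hand-built to mimic what Mongoose passes to message.

```javascript
// The same validator/message pair as above, held in a plain object
// so it can be exercised without Mongoose or a database.
const pincodeRule = {
  validator: function (v) {
    return /^\d{6}$/.test(v);
  },
  message: (props) => `${props.value} is not a valid pincode`,
};

console.log(pincodeRule.validator('110054')); // true
console.log(pincodeRule.validator('1100'));   // false (too short)
console.log(pincodeRule.message({ value: '1100' }));
// "1100 is not a valid pincode"
```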

Nested objects and references

Data in real applications is never straightforward. A user has an address. An order has multiple items. Mongoose gives you two ways to handle this: embed the related data directly inside the document, or store a reference to another document by its _id and look it up separately.

For an address, embedding makes sense. You would never want to fetch an address without the user it belongs to, and an address has no meaning outside of that context. The schema looks like a nested object:

address: {

street: { type: String, trim: true },

city: { type: String, trim: true },

pincode: {

type: String,

match: [/^\d{6}$/, 'Invalid pincode'],

},

},

You read it by chaining dots: user.address.city.

For an order that belongs to a user, a reference makes more sense. A user exists independently and appears in many places. You store the user's _id in the order:

// models/order.model.js

import { Schema, model } from 'mongoose';

const orderSchema = new Schema({

user: {

type: Schema.Types.ObjectId,

ref: 'User',

required: true,

},

restaurant: {

type: Schema.Types.ObjectId,

ref: 'Restaurant',

required: true,

},

items: [{

name: { type: String, required: true },

quantity: { type: Number, min: 1, default: 1 },

price: { type: Number, required: true, min: 0 },

}],

totalAmount: { type: Number, required: true },

status: {

type: String,

enum: ['placed', 'confirmed', 'out_for_delivery', 'delivered', 'cancelled'],

default: 'placed',

},

}, { timestamps: true });

export const Order = model('Order', orderSchema);

The ref: 'User' tells Mongoose which model to look up when you call .populate():

const order = await Order

.findById(orderId)

.populate('user', 'name email')

.populate('restaurant', 'name address');

console.log(order.user.name); // the populated user's name

Without populate(), order.user is just an ObjectId. With it, Mongoose runs the extra lookup and replaces the ID with the actual document. The rule for choosing is simpler than it sounds: if the data only makes sense alongside its parent and will always be read together with it, embed it. If the data exists on its own and gets used from multiple places, reference it.
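Conceptually, populating a reference means swapping a stored ID for the document it points to. The sketch below mimics that with plain objects and a Map. populateField is a hypothetical helper and the IDs are shortened strings, so treat it as a mental model rather than how Mongoose implements populate().

```javascript
// Stand-in "collection": users keyed by their ID (shortened for readability).
const usersById = new Map([
  ['u1', { _id: 'u1', name: 'John Doe', email: 'john@example.com' }],
]);

// A referenced order stores only the user's ID.
const storedOrder = { _id: 'o1', user: 'u1', totalAmount: 450 };

// Hypothetical helper: returns a copy of `doc` with `field` replaced by
// the matching document, or null when the reference points at nothing.
function populateField(doc, field, collection) {
  const referenced = collection.get(doc[field]) ?? null;
  return { ...doc, [field]: referenced };
}

const populated = populateField(storedOrder, 'user', usersById);
console.log(storedOrder.user);    // "u1"
console.log(populated.user.name); // "John Doe"
```

The extra lookup is the cost of referencing: the order stays small, but reading the user's name takes a second step.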

Virtual fields

A virtual is a field that does not get saved to the database. It is computed from other fields whenever you read the document. For the food delivery app, a displayAddress virtual combines the separate address fields into one readable string:

userSchema.virtual('displayAddress').get(function() {

if (!this.address?.city) return 'No address saved';

return `${this.address.street}, ${this.address.city} - ${this.address.pincode}`;

});

const user = await User.findOne({ email: 'john@example.com' });

console.log(user.displayAddress);

// example:"Civil Lines, Delhi - 110054"

Virtuals do not appear in JSON.stringify() output by default, which means they will not show up in API responses unless you enable them. Add toJSON: { virtuals: true } to the schema options:

const userSchema = new Schema({ /* fields */ }, {

timestamps: true,

toJSON: { virtuals: true },

toObject: { virtuals: true },

});

Use regular functions, not arrow functions, for virtuals and hooks. Arrow functions do not have their own this, so this.address inside an arrow function refers to nothing useful. Mongoose passes the document as this, and a regular function is what receives it correctly.
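The difference is easy to see in plain JavaScript. In this sketch, makeGetters, outer, and doc are names invented for the example, and call(doc) stands in for Mongoose invoking a getter or hook with the document as this.

```javascript
// Returns one arrow function and one regular function created in the same place.
function makeGetters() {
  const arrow = () => this;           // captures `this` from makeGetters
  function regular() { return this; } // gets `this` from how it is called
  return { arrow, regular };
}

const outer = { label: 'outer scope' };
const doc = { label: 'document' };

const { arrow, regular } = makeGetters.call(outer);

// call(doc) plays the role of Mongoose invoking a getter or hook on a document.
console.log(regular.call(doc).label); // "document"
console.log(arrow.call(doc).label);   // "outer scope"
```

The arrow function ignores the object it was called with, which is exactly why this.address comes back undefined inside an arrow-function virtual.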

Hooks

A hook is a function that runs automatically before or after a database operation.

The most important hook in almost every app is hashing a password before saving it. You should never store plain text passwords in a database:

import bcrypt from 'bcryptjs';

// Mongoose awaits the promise returned by an async hook, so next() is not needed here.

// Older callback-style hooks accept next as a parameter and call it when they finish.

userSchema.pre('save', async function() {

if (!this.isModified('password')) return;

this.password = await bcrypt.hash(this.password, 12);

});

// 12 is the bcrypt cost factor: the work is 2^12 rounds, so each +1 doubles the hashing time

The isModified('password') check is necessary. Without it, every time you update any field on a user, the hook would rehash an already hashed password. Checking isModified means the hook only runs when the password field actually changed.
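To see why the guard matters, here is a toy model of the hook. fakeHash is a deliberately simple stand-in for bcrypt.hash so the effect is visible, and saveWithHook imitates the pre-save step. Neither is real Mongoose or bcrypt behaviour.

```javascript
// Stand-in for a real hash function, for illustration only.
// Never use anything like this for actual passwords.
const fakeHash = (value) => `hashed(${value})`;

// Imitates the pre('save') step. `modified` plays the role of
// this.isModified('password'); `guard` toggles the check on or off.
function saveWithHook(user, { modified, guard }) {
  if (guard && !modified) return user;
  return { ...user, password: fakeHash(user.password) };
}

// First save: the password is new, so it gets hashed once.
const user = saveWithHook(
  { name: 'John', password: 'secret' },
  { modified: true, guard: true }
);

// A later save that only changes the name.
const guarded = saveWithHook({ ...user, name: 'Johnny' }, { modified: false, guard: true });
const unguarded = saveWithHook({ ...user, name: 'Johnny' }, { modified: false, guard: false });

console.log(guarded.password);   // "hashed(secret)"
console.log(unguarded.password); // "hashed(hashed(secret))"
```

With the guard removed, the stored value no longer corresponds to the user's password, so every later login comparison would fail.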

Post hooks run after the operation completes:

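// send welcome email to new users

// isNew is already false inside post('save'), so record it in a pre hook first

userSchema.pre('save', function() {

this.$locals.wasNew = this.isNew;

});

userSchema.post('save', async function (doc) {

if (doc.$locals.wasNew) {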

try {

await sendWelcomeEmail(doc.email, doc.name);

} catch (err) {

console.error("Email failed to send:", err);

}

}

});

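There is a gotcha with update operations. When you use findByIdAndUpdate(), the pre('save') hook does not fire. Mongoose treats updates differently from saves: save middleware is skipped, and schema validators are off by default. findByIdAndUpdate() triggers findOneAndUpdate middleware instead, so that is where you turn validation on for updates: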

userSchema.pre('findOneAndUpdate', function() {

this.setOptions({ runValidators: true });

});

Instance methods and static methods

Instance methods run on individual documents. Static methods run on the model class itself.

The most common instance method is password comparison for login:

userSchema.methods.comparePassword = async function(plainTextPassword) {

return bcrypt.compare(plainTextPassword, this.password);

};

// in your login route:

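const user = await User.findOne({ email: req.body.email }).select('+password');

// findOne() resolves to null when no user matches, so check before calling the method

if (!user) return res.status(401).json({ error: 'Invalid email or password' });

const match = await user.comparePassword(req.body.password);

if (!match) return res.status(401).json({ error: 'Wrong password' });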

The .select('+password') call is needed because the password field is marked select: false in the schema, which makes Mongoose leave it out of query results by default. The +password syntax opts it back in for this one query where you actually need it.

Static methods are good for reusable query logic that belongs on the model itself:

userSchema.statics.findActiveRiders = async function(city) {

return this.find({

role: 'rider',

isActive: true,

'address.city': city,

});

};

const riders = await User.findActiveRiders('Mumbai');

Querying

Mongoose wraps MongoDB's query system in a clean API. These operations cover almost everything you will need day to day:

// all customers

const users = await User.find({ role: 'customer' });

// find one by email

const user = await User.findOne({ email: 'john@example.com' });

// find by MongoDB _id

const userById = await User.findById(userId);

// update and get back the updated version

const updated = await User.findByIdAndUpdate(

userId,

{ isActive: false },

{ new: true } // this returns the document after change

);

// delete

await User.findByIdAndDelete(userId);

// pick specific fields, sort, paginate

const page2 = await User

.find({ role: 'customer' })

.select('name email')

.sort({ createdAt: -1 })

.skip(10)

.limit(10);

.select() is worth understanding early. Controlling which fields come back is how you avoid accidentally sending password hashes to the frontend.
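The skip and limit values in the pagination query usually come from a page number in the request. Here is a small sketch of that conversion; pageToSkipLimit is a hypothetical helper, and the default page size of 10 simply matches the example above.

```javascript
// Hypothetical helper: converts a 1-based page number into skip/limit values.
// Anything that is not a positive number falls back to page 1.
function pageToSkipLimit(page, pageSize = 10) {
  const safePage = Math.max(1, Math.floor(Number(page)) || 1);
  return { skip: (safePage - 1) * pageSize, limit: pageSize };
}

console.log(pageToSkipLimit(1));     // { skip: 0, limit: 10 }
console.log(pageToSkipLimit(2));     // { skip: 10, limit: 10 }
console.log(pageToSkipLimit('3', 20)); // { skip: 40, limit: 20 }
console.log(pageToSkipLimit('abc')); // { skip: 0, limit: 10 }
```

The result for page 2 is the same skip(10) and limit(10) pair used in the query above.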

Indexes

Indexes were one concept that took me a while to wrap my head around. Without indexes, MongoDB reads every document in a collection one by one to find a match. That is fine for a hundred documents. At a hundred thousand, a query that took 2ms starts taking seconds. The culprit is almost always a missing index.

An index is a separate, sorted data structure that MongoDB maintains alongside your collection. When you query a field that has an index, MongoDB looks up the value in that structure instead of scanning every document. The difference in speed can be several orders of magnitude.
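The gap between scanning and looking up can be sketched in plain JavaScript. MongoDB's indexes are B-trees; the sorted array with binary search below is only a simplified analogy, and every name in it (docs, scan, emailIndex, indexedLookup) is invented for the example.

```javascript
// Ten thousand fake documents, stored in no particular key order.
const docs = Array.from({ length: 10000 }, (_, i) => ({
  email: `user${String((i * 7919) % 10000).padStart(5, '0')}@example.com`,
}));

// Without an index: check documents one by one and count the comparisons.
function scan(collection, email) {
  let comparisons = 0;
  for (const doc of collection) {
    comparisons += 1;
    if (doc.email === email) return { doc, comparisons };
  }
  return { doc: null, comparisons };
}

// A toy "index": the emails in sorted order, each pointing at its document's position.
const emailIndex = docs
  .map((doc, position) => ({ key: doc.email, position }))
  .sort((a, b) => (a.key < b.key ? -1 : a.key > b.key ? 1 : 0));

// With the index: binary search over the sorted keys.
function indexedLookup(collection, index, email) {
  let low = 0;
  let high = index.length - 1;
  let comparisons = 0;
  while (low <= high) {
    const mid = (low + high) >> 1;
    comparisons += 1;
    if (index[mid].key === email) {
      return { doc: collection[index[mid].position], comparisons };
    }
    if (index[mid].key < email) low = mid + 1;
    else high = mid - 1;
  }
  return { doc: null, comparisons };
}

const target = docs[docs.length - 1].email;
console.log(scan(docs, target).comparisons);                      // 10000
console.log(indexedLookup(docs, emailIndex, target).comparisons); // at most 14
```

Both lookups return the same document. The scan touches every one of the 10,000 entries in the worst case, while the sorted structure needs at most 14 comparisons.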

You add indexes inside the schema in Mongoose. unique: true on the email field already creates one automatically. For other fields, you either add index: true on the field definition or call schema.index() directly:

// option 1: inline on the field

email: { type: String, unique: true }, // index created automatically

role: { type: String, index: true }, // single-field index

// option 2: schema.index() for more control

userSchema.index({ role: 1, isActive: 1 }); // compound index

userSchema.index({ 'address.city': 1 }); // index on a nested field

userSchema.index({ createdAt: -1 }); // -1 = descending order

The 1 means ascending order, -1 means descending. For most lookups the direction does not matter much, but for sorted queries it can. A compound index covers queries that filter by multiple fields together. If your app frequently fetches active riders in a specific city, an index on { role: 1, isActive: 1, 'address.city': 1 } will serve that query far faster than three separate single-field indexes.

There is a tradeoff. Indexes speed up find() but slightly slow down save(), because MongoDB has to update every index whenever a document changes. For most applications this is completely fine. The read speedup far outweighs the write cost. But indexing every field by default is not a good habit. Add indexes for fields you actually filter or sort by. Also, if you're adding an index to a live database with millions of records, do it during off-peak hours. Building an index on a massive active collection can lock the collection or spike CPU usage, leading to a temporary slowdown for your users.

A special case worth knowing is the TTL (Time To Live) index. It automatically deletes documents after a certain amount of time. This is perfect for email verification tokens, password reset links, or session records that should expire on their own:

const tokenSchema = new Schema({

userId: { type: Schema.Types.ObjectId, required: true },

token: { type: String, required: true },

createdAt: { type: Date, default: Date.now },

});

// MongoDB automatically deletes these documents about 1 hour after createdAt

tokenSchema.index({ createdAt: 1 }, { expireAfterSeconds: 3600 });

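MongoDB handles the deletion automatically without a cleanup script. The background task that removes expired documents runs roughly once a minute, so a document can outlive its expiry time by a short while.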
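Since the background cleanup is not instantaneous, it can be worth checking the age of a token in application code as well before trusting it. The sketch below applies the same rule a TTL index uses; isExpired is a hypothetical helper, and the dates are arbitrary sample values.

```javascript
// Hypothetical helper: mirrors the rule a TTL index applies. A document is
// treated as expired once createdAt + expireAfterSeconds has passed.
function isExpired(createdAt, expireAfterSeconds, now = new Date()) {
  return now.getTime() >= createdAt.getTime() + expireAfterSeconds * 1000;
}

const createdAt = new Date('2024-12-01T10:00:00Z');

console.log(isExpired(createdAt, 3600, new Date('2024-12-01T10:59:59Z'))); // false
console.log(isExpired(createdAt, 3600, new Date('2024-12-01T11:00:00Z'))); // true
```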

Error handling

MongoDB and Mongoose throw different types of errors, and you need to handle them differently. Bundling all errors into a single catch block and returning a 500 response is a pattern that makes debugging very frustrating.

There are three categories you will encounter regularly. The first is a ValidationError from Mongoose, which fires when a document fails schema constraint checks. The second is error code 11000 from MongoDB itself, which fires when a unique constraint is violated at the database level. The third is a CastError, which fires when you pass something to findById() that is not a valid MongoDB ObjectId format.

// a reusable error handler you can drop into any project

function handleMongooseError(err) {

// validation failed before reaching the database

if (err.name === 'ValidationError') {

const messages = Object.values(err.errors).map(e => e.message);

return { status: 400, message: messages.join(', ') };

}

// duplicate key: unique constraint violated

if (err.code === 11000) {

const field = Object.keys(err.keyValue)[0];

return { status: 409, message: `${field} already exists` };

}

// invalid ObjectId format passed to findById or similar

if (err.name === 'CastError') {

return { status: 400, message: 'Invalid ID format' };

}

return { status: 500, message: 'An unexpected error occurred on the server.' };

}

If your route receives an id parameter from a URL and that parameter is not a valid ObjectId, User.findById(id) rejects with a CastError before the query ever reaches the database. Left uncaught, that becomes an unhandled rejection. Caught and passed through the handler, it becomes a clean 400 response.

Using this in a route looks like:

app.post('/users', async (req, res) => {

try {

const user = await User.create(req.body);

res.status(201).json(user);

} catch (err) {

const { status, message } = handleMongooseError(err);

res.status(status).json({ error: message });

}

});

Aggregation pipelines

Once your data is in MongoDB and you understand basic querying, you will eventually need to answer questions like: what is the total revenue per restaurant this month, how many orders has each rider completed, what is the average order value by city. Basic find() queries cannot answer these. Aggregation pipelines can.

An aggregation pipeline is a sequence of stages that transform documents. Each stage takes documents in, does something to them, and passes the result to the next stage. Think of it like an assembly line for your data.

const revenueByRestaurant = await Order.aggregate([

// stage 1: only delivered orders from this month

{

$match: {

status: 'delivered',

createdAt: { $gte: new Date('2024-12-01') },

}

},

// stage 2: group by restaurant and sum totals

{

$group: {

_id: '$restaurant',

totalRevenue: { $sum: '$totalAmount' },

orderCount: { $sum: 1 },

}

},

// stage 3: sort by revenue, highest first

{

$sort: { totalRevenue: -1 }

},

// stage 4: join with restaurants to get the name

{

$lookup: {

from: 'restaurants',

localField: '_id',

foreignField: '_id',

as: 'restaurantInfo',

}

},

// stage 5: reshape the output

{

$project: {

restaurantName: { $arrayElemAt: ['$restaurantInfo.name', 0] },

totalRevenue: 1,

orderCount: 1,

}

}

]);

$match is like a find() filter. Put it as early as possible in the pipeline so you are working with fewer documents in the stages that follow. $group is how you aggregate: you specify a _id to group by and then use accumulator operators like $sum, $avg, $min, and $max to compute values. $lookup is MongoDB's join equivalent. It pulls in documents from another collection and attaches them to the current documents. $project controls which fields appear in the output, similar to .select() on a regular query.

These four stages, together with $sort, cover the vast majority of reporting and analytics work you will do in a real application.
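If the stage-by-stage idea is still abstract, it may help to see the same computation on an in-memory array. This sketch reproduces the filter, group, and sort steps in plain JavaScript. The sample orders and shortened restaurant IDs are invented, and the real pipeline runs inside MongoDB rather than in your Node process.

```javascript
// Sample data standing in for the orders collection (restaurant IDs shortened).
const orders = [
  { restaurant: 'r1', status: 'delivered', totalAmount: 300 },
  { restaurant: 'r2', status: 'delivered', totalAmount: 500 },
  { restaurant: 'r1', status: 'cancelled', totalAmount: 250 },
  { restaurant: 'r1', status: 'delivered', totalAmount: 400 },
];

// Like $match: keep only delivered orders.
const matched = orders.filter((o) => o.status === 'delivered');

// Like $group: one bucket per restaurant, with $sum-style accumulators.
const groups = new Map();
for (const o of matched) {
  const g = groups.get(o.restaurant) ?? { _id: o.restaurant, totalRevenue: 0, orderCount: 0 };
  g.totalRevenue += o.totalAmount;
  g.orderCount += 1;
  groups.set(o.restaurant, g);
}

// Like $sort: highest revenue first.
const revenueByRestaurant = [...groups.values()].sort(
  (a, b) => b.totalRevenue - a.totalRevenue
);

console.log(revenueByRestaurant);
// [ { _id: 'r1', totalRevenue: 700, orderCount: 2 },
//   { _id: 'r2', totalRevenue: 500, orderCount: 1 } ]
```

The cancelled order is dropped at the first step, which is the same reason to put the filtering stage early in a real pipeline: every later step has less to process.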

Transactions

By default, a MongoDB write either succeeds or fails on its own. That is fine most of the time. But sometimes you have an operation that involves multiple writes that must all succeed together or all fail together. Charging a user for an order and creating the order record is the classic example. If the charge succeeds but the order creation fails, the user paid for something that does not exist. That is a bad place to be.

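MongoDB supports multi-document transactions for exactly this case. They need a replica set or sharded cluster, so a standalone local mongod will not run them. Mongoose exposes them through sessions: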

const session = await mongoose.startSession();

try {

session.startTransaction();

// both of these run inside the same transaction

const order = await Order.create([{

user: userId,

restaurant: restaurantId,

items: cartItems,

totalAmount: total,

status: 'placed',

}], { session });

await User.findByIdAndUpdate(

userId,

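// assumes the User schema defines orderCount: Number; in strict mode Mongoose drops fields that are not in the schema

{ $inc: { orderCount: 1 } },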

{ session }

);

await session.commitTransaction();

return order[0];

} catch (err) {

// if anything fails, roll back both writes

await session.abortTransaction();

throw err;

} finally {

await session.endSession();

}

The key is passing { session } to every operation inside the transaction. Without it, that operation runs outside the transaction and will not be rolled back if something fails. The finally block ensures the session is always closed, even when an error is thrown.

The complete schema

Pulling it all together, here is the full User model with constraints, a nested address, a virtual, the password hook, the login method, and indexes:

// models/user.model.js

import { Schema, model } from 'mongoose';

import bcrypt from 'bcryptjs';

const userSchema = new Schema({

name: {

type: String,

required: [true, 'Name is required'],

trim: true,

minlength: [2, 'Name must be at least 2 characters'],

maxlength: [60, 'Name cannot exceed 60 characters'],

},

email: {

type: String,

required: [true, 'Email is required'],

unique: true,

lowercase: true,

trim: true,

match: [/^\S+@\S+\.\S+$/, 'Please enter a valid email'],

},

password: {

type: String,

required: [true, 'Password is required'],

minlength: [8, 'Password must be at least 8 characters'],

select: false,

},

phone: {

type: String,

match: [/^[6-9]\d{9}$/, 'Enter a valid 10-digit mobile number'],

},

address: {

street: { type: String, trim: true },

city: { type: String, trim: true },

pincode: { type: String, match: [/^\d{6}$/, 'Invalid pincode'] },

},

role: {

type: String,

enum: ['customer', 'rider', 'admin'],

default: 'customer',

},

isActive: { type: Boolean, default: true },

}, {

timestamps: true,

toJSON: { virtuals: true },

toObject: { virtuals: true },

});

// indexes

userSchema.index({ role: 1, isActive: 1 });

userSchema.index({ 'address.city': 1 });

// virtual

userSchema.virtual('displayAddress').get(function() {

if (!this.address?.city) return 'No address saved';

return `${this.address.street}, ${this.address.city} - ${this.address.pincode}`;

});

// hooks

userSchema.pre('save', async function() {

if (!this.isModified('password')) return;

this.password = await bcrypt.hash(this.password, 12);

});

userSchema.pre('findOneAndUpdate', function() {

this.setOptions({ runValidators: true });

});

// instance method

userSchema.methods.comparePassword = async function(candidate) {

return bcrypt.compare(candidate, this.password);

};

export const User = model('User', userSchema);

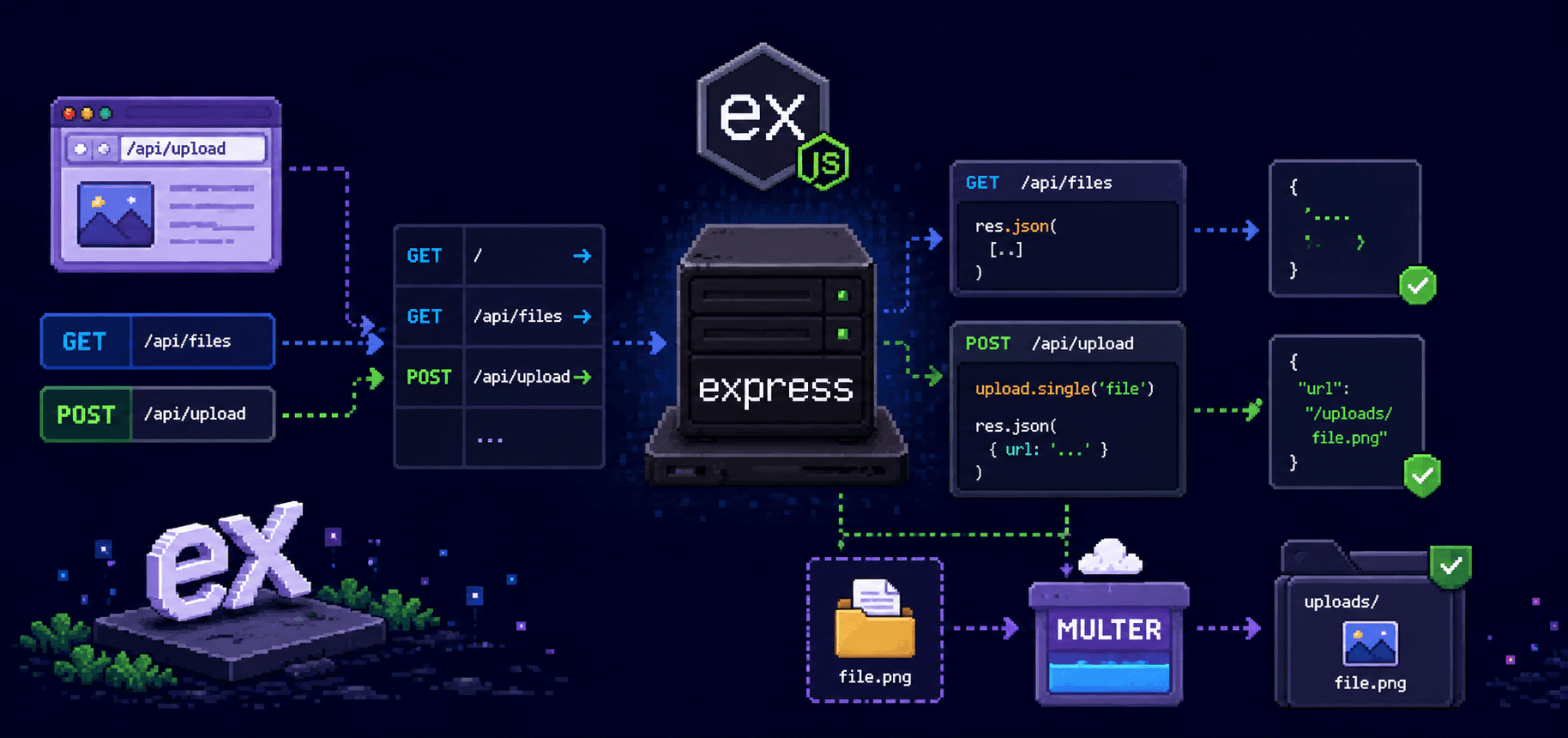

How it all fits together

It helps to see the full picture in one place. Your application code talks to Mongoose. Mongoose talks to MongoDB. The schema sits in the middle describing what is allowed, what gets validated, what runs automatically, and how queries behave.

Your route calls a method on the model. The model checks the schema constraints. Any matching pre-hooks run. The data goes to MongoDB. Post-hooks run. The result comes back as a typed Mongoose document with your virtual fields and methods attached.

MongoDB is a genuinely good place to start. The document model matches how JavaScript developers already think about data, the queries are readable, and you can get something working quickly without a lot of infrastructure. Mongoose adds the structure that MongoDB lacks on its own, which is the right tradeoff for most Node.js projects.