Under the Hood: How Node.js Actually Runs Your Code

Unmasking the V8 Engine and libuv in Node.js

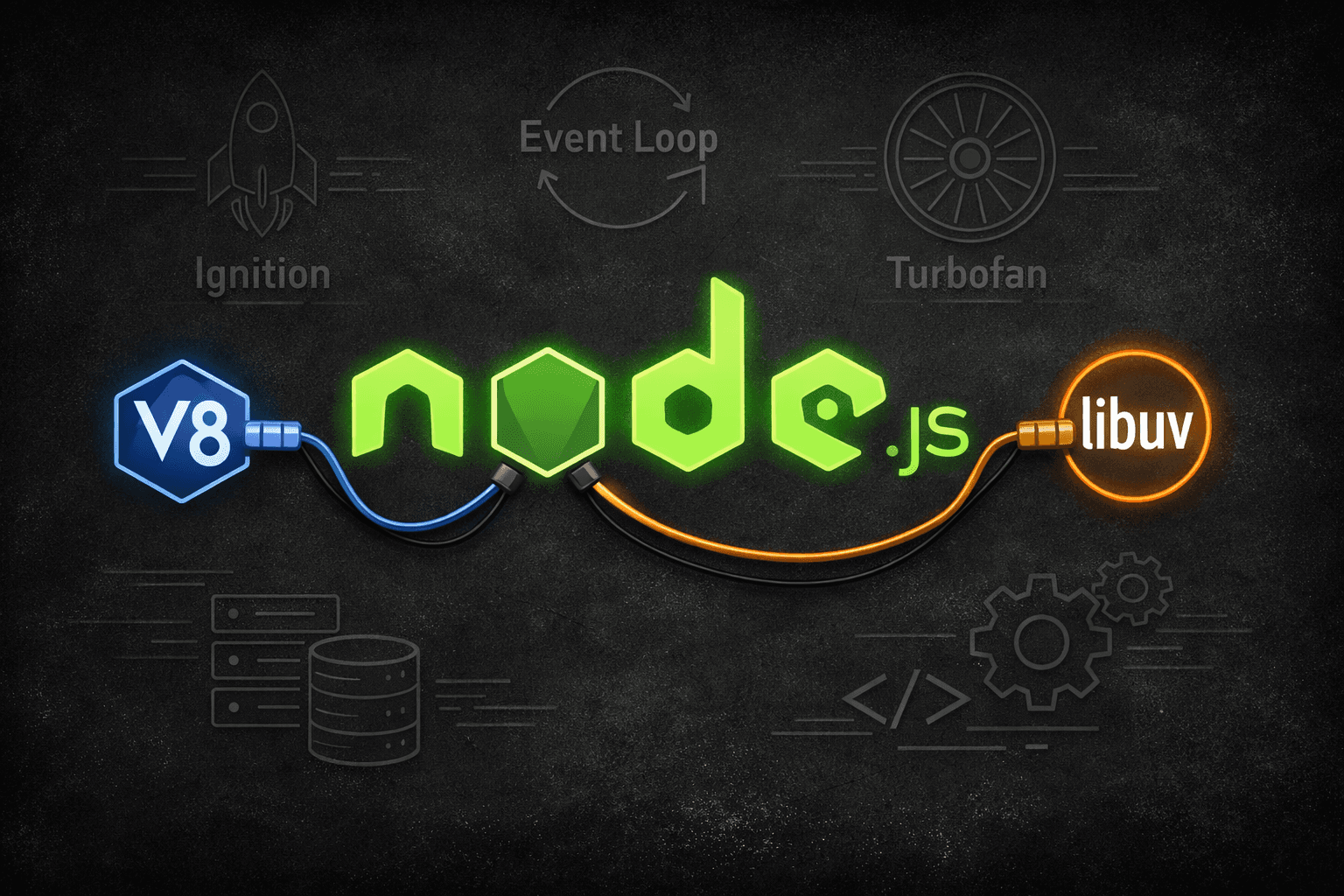

There is a whole world between your JavaScript code and the machine that executes it. When you first started using Node.js, you probably heard terms like non-blocking code and the event loop, and accepted them without digging deeper, until the code you wrote behaved differently from what you expected: the process was slow, or operations ran in a surprising order. The explanation for these behaviors lies in two pieces of software: V8 and libuv.

Node.js is, in reality, a collection of software components, of which V8 and libuv are the two most central:

V8 Engine: This is the core engine that parses and executes your JavaScript, transforming it into machine code that the processor can understand.

libuv: This C library is responsible for handling the file system, networking, timers, and the event loop, enabling Node to perform asynchronous, non-blocking tasks.

V8: The JavaScript Engine

V8 is the JavaScript engine Google built for Chrome. It is responsible for executing JavaScript code, and for executing it as fast as possible. When Ryan Dahl created Node.js, he embedded V8 in it so that JavaScript could run outside the browser.

The journey of your code through V8 looks roughly like this:

The Parser

V8's first job is to parse the code: it reads through the source and builds an Abstract Syntax Tree (AST), a structured representation of the code. For the line const x = 5 + 3, the tree is a variable declaration that contains a binary expression, which in turn contains two number literals.

The parser also throws a SyntaxError before any of the code runs if it finds a basic mistake in your source:

const x = ; // expression expected

function () {} // identifier expected

const return = 5; // 'return' is not allowed as a variable declaration name.
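One way to observe that parsing happens before execution is to compile source text without running it. The sketch below assumes Node's built-in node:vm module; checkSyntax is a hypothetical helper name, and the exact error messages depend on the V8 version, so none are shown here.

```javascript
const vm = require('node:vm');

// Hypothetical helper: compile the source without executing it
// and report whether the parser accepted it.
function checkSyntax(source) {
  try {
    new vm.Script(source); // compiles only; nothing in `source` runs
    return 'ok';
  } catch (err) {
    return `${err.name}: ${err.message}`;
  }
}

console.log(checkSyntax('const x = 5 + 3;'));
console.log(checkSyntax('const x = ;'));
console.log(checkSyntax('function () {}'));
console.log(checkSyntax('const return = 5;'));
```

The first call should report ok, while the other three should each report a SyntaxError, even though none of the snippets was ever executed.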

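How to see the AST on your machine

You can inspect an AST with Esprima, a JavaScript parser that you can install via npm install esprima. Esprima is a separate parser, not V8's own, but the tree it prints conveys the same idea.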

// create a js file ast.js

const esprima = require("esprima");

const code = `const x = 5 + 3`;

const ast = esprima.parseScript(code);

console.log(JSON.stringify(ast, null, 2));

// run node ast.js

// AST tree

{

"type": "Program",

"body": [

{

"type": "VariableDeclaration",

"declarations": [

{

"type": "VariableDeclarator",

"id": {

"type": "Identifier",

"name": "x"

},

"init": {

"type": "BinaryExpression",

"operator": "+",

"left": {

"type": "Literal",

"value": 5,

"raw": "5"

},

"right": {

"type": "Literal",

"value": 3,

"raw": "3"

}

}

}

],

"kind": "const"

}

],

"sourceType": "script"

}
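To make the tree structure more concrete, here is a minimal sketch of a function that walks the init node from the output above and computes its value. The node is copied by hand so the example runs without Esprima, and evaluate is a hypothetical name. This is only a toy tree-walker that handles two node types; it is not how V8 executes code, since V8 compiles the AST to bytecode instead.

```javascript
// The "init" node from the AST above, copied by hand
const init = {
  type: 'BinaryExpression',
  operator: '+',
  left: { type: 'Literal', value: 5, raw: '5' },
  right: { type: 'Literal', value: 3, raw: '3' },
};

// Recursively evaluate a node: literals return their value,
// binary expressions evaluate both children and combine them.
function evaluate(node) {
  switch (node.type) {
    case 'Literal':
      return node.value;
    case 'BinaryExpression': {
      const left = evaluate(node.left);
      const right = evaluate(node.right);
      if (node.operator === '+') return left + right;
      throw new Error(`Unsupported operator: ${node.operator}`);
    }
    default:
      throw new Error(`Unsupported node type: ${node.type}`);
  }
}

console.log(evaluate(init)); // 8
```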

Ignition: The Interpreter

V8 hands the AST to a component called Ignition, which compiles it into bytecode. Bytecode is not machine code: the CPU cannot run it directly. It is a compact set of instructions that Ignition, V8's interpreter, executes one by one.

To see how it works:

Either create a JS file containing const x = 5 + 3 and run node --print-bytecode test.js in your terminal, or run node --print-bytecode --print-bytecode-filter="" --eval "const x = 5 + 3" directly.

The output is overwhelming at first, but in simplified terms, this is what it does:

// simplified illustration; real output varies by V8 version, and V8 may fold 5 + 3 into a single constant

LdaSmi [5] // Load Small Integer 5 into the accumulator

Star r0 // Store accumulator into register r0

LdaSmi [3] // Load Small Integer 3 into the accumulator

Star r1 // Store accumulator into register r1

Ldar r0 // Load r0 back into the accumulator

Add r1 // Add r1 to the accumulator (5 + 3 = 8)

Star r2 // Store result (8) into register r2, this is x
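The accumulator-and-register model in the listing above can be imitated in a few lines of JavaScript. This is a toy sketch under the assumption that each instruction behaves exactly as the comments in the listing describe; the program array and the run function are hypothetical names, and Ignition's real implementation is far more involved.

```javascript
// The simplified instruction listing, encoded as [opcode, operand] pairs
const program = [
  ['LdaSmi', 5],
  ['Star', 'r0'],
  ['LdaSmi', 3],
  ['Star', 'r1'],
  ['Ldar', 'r0'],
  ['Add', 'r1'],
  ['Star', 'r2'],
];

// Toy interpreter: one accumulator plus a set of named registers
function run(instructions) {
  let accumulator;
  const registers = {};
  for (const [opcode, operand] of instructions) {
    switch (opcode) {
      case 'LdaSmi': // load a small integer into the accumulator
        accumulator = operand;
        break;
      case 'Star': // store the accumulator into a register
        registers[operand] = accumulator;
        break;
      case 'Ldar': // load a register into the accumulator
        accumulator = registers[operand];
        break;
      case 'Add': // add a register to the accumulator
        accumulator = accumulator + registers[operand];
        break;
      default:
        throw new Error(`Unknown opcode: ${opcode}`);
    }
  }
  return registers;
}

console.log(run(program)); // { r0: 5, r1: 3, r2: 8 }
```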

The pipeline so far looks like: source code → Parser → AST → Ignition → bytecode.

TurboFan: The Optimising Compiler

V8 does not stop at interpreting. While Ignition runs the bytecode, V8 keeps track of which parts of the code execute often. A function that is called many times, for example inside a loop, is considered hot code and is sent to V8's optimising compiler, TurboFan. TurboFan analyses the bytecode and compiles it down to optimised machine code, so frequently called functions run faster from then on.

A simple function that V8 is likely to optimise:

function add(a, b) {

return a + b;

}

// Call it many times. V8 notices this is "hot"

let total = 0;

for (let i = 0; i < 1_000_000; i++) {

total = add(total, i); // Always numbers

}

console.log(total);
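The "Always numbers" comment matters because optimised code relies on what V8 has observed so far, such as add only ever receiving numbers. The sketch below keeps add hot with numbers and then calls it once with strings. Running it with V8's --trace-opt and --trace-deopt flags (for example node --trace-opt --trace-deopt hot.js, where hot.js is a hypothetical file name) may print optimisation and deoptimisation events, but the log format and the thresholds vary between V8 versions, so no trace output is shown here.

```javascript
function add(a, b) {
  return a + b;
}

// Warm-up: the same kind of call every time (number + number)
let total = 0;
for (let i = 0; i < 1_000_000; i++) {
  total = add(total, i);
}

// A call with different types still produces the correct result;
// V8 may simply have to fall back to less specialised code for it.
const label = add('total: ', String(total));

console.log(label); // total: 499999500000
```

Whatever V8 decides internally, the result is always the same; optimisation changes how fast the function runs, never what it returns.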

V8 also handles memory management through a process called garbage collection. When you create objects and variables, V8 allocates memory for them. When your code can no longer reach an object, V8 frees the memory allocated to it. Garbage collection runs periodically and can affect performance in memory-heavy applications.
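A rough way to watch allocation happen is process.memoryUsage(), which reports the size of V8's heap. The sketch below is illustrative only: the numbers differ between machines and Node versions, and V8 decides on its own when to collect. The final step only runs if Node was started with the --expose-gc flag, which makes global.gc available.

```javascript
const toMB = (bytes) => (bytes / 1024 / 1024).toFixed(1);

const before = process.memoryUsage().heapUsed;

// Allocate one million small objects and keep them reachable
let users = Array.from({ length: 1_000_000 }, (_, id) => ({ id }));
const during = process.memoryUsage().heapUsed;

console.log(`heap before: ${toMB(before)} MB`);
console.log(`heap while "users" is reachable: ${toMB(during)} MB`);

// Drop the only reference. The objects are now unreachable, so V8 is
// free to reclaim them, but it chooses the moment itself.
users = null;

// global.gc only exists when Node is started with --expose-gc
if (typeof global.gc === 'function') {
  global.gc();
  const after = process.memoryUsage().heapUsed;
  console.log(`heap after forced GC: ${toMB(after)} MB`);
}
```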

libuv: The Asynchronous Bridge

V8 can execute JavaScript, but on its own it has no way to read files, make network requests, or set timers. Those operations go through the operating system and can take an unpredictable amount of time, and the process should not sit idle while it waits for them.

The solution to this problem is libuv, a C library originally written for Node.js that handles I/O operations without blocking the rest of your program.

The Thread Pool

It is commonly said that Node.js is single-threaded, but libuv maintains a pool of worker threads in the background. By default the pool has four threads; the size can be changed with the UV_THREADPOOL_SIZE environment variable:

# Run Node with 8 libuv threads instead of the default 4

UV_THREADPOOL_SIZE=8 node server.js
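The pool size can be made visible with work that libuv runs on its threads, such as crypto.pbkdf2. The sketch below starts eight hashes at once and prints when each one finishes; timeHashes is a hypothetical helper, and the password, salt, and iteration count are arbitrary values chosen for illustration.

```javascript
const crypto = require('node:crypto');

// Hypothetical helper: start `count` hashes at once and resolve with
// the time (ms since start) at which each one finished.
function timeHashes(count) {
  const start = Date.now();
  const jobs = Array.from({ length: count }, () =>
    new Promise((resolve, reject) => {
      crypto.pbkdf2('password', 'salt', 100_000, 64, 'sha512', (err) => {
        if (err) reject(err);
        else resolve(Date.now() - start);
      });
    })
  );
  return Promise.all(jobs);
}

timeHashes(8).then((finishTimes) => {
  finishTimes.forEach((ms, i) => {
    console.log(`hash ${i + 1} finished after ${ms} ms`);
  });
});
```

With the default pool of four threads, the hashes tend to finish in two groups of four. With UV_THREADPOOL_SIZE=8 they may all finish at about the same time, depending on how many CPU cores are available. Actual timings vary by machine, so none are shown here.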

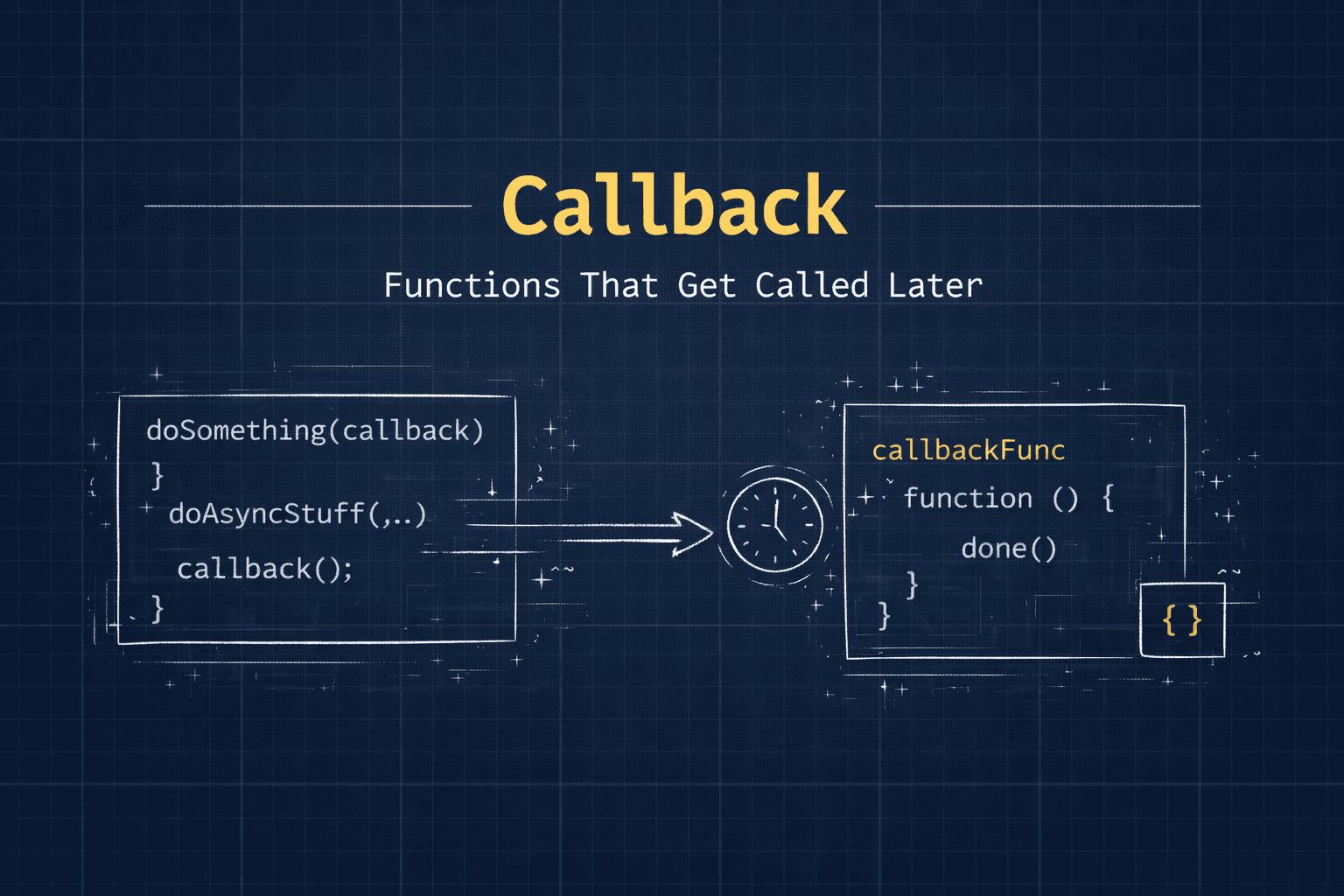

When fs.readFile() is called, Node registers the callback and hands the file read to one of the background threads. That thread does the blocking work, and when it finishes, the result is placed in a queue. The event loop then picks it up and invokes the callback. This is how Node.js stays non-blocking.

const fs = require('fs');

console.log('1. Script starts');

// This goes to libuv's thread pool. It does NOT block here.

fs.readFile('./data.txt', 'utf8', (err, data) => {

console.log('3. File read finished (came back from libuv)');

});

// This runs IMMEDIATELY after registering the file read

console.log('2. Still on the main thread - not blocked');

// output

// 1. Script starts

// 2. Still on the main thread - not blocked

// 3. File read finished (came back from libuv)

In the above code, message 2 prints before message 3, even though the callback that prints message 3 appears earlier in the source. The file was being read in the background while message 2 was printed, and the callback could only run once the main script had finished. This ordering is the direct result of how libuv works.

The Event Loop

The event loop is the bridge between V8 and libuv. As the name says, it is a loop that runs continuously, checking for pending work and running whatever is ready.

Every iteration of the loop is called a tick. In every tick, the event loop goes through its phases in a fixed order. libuv implements and drives the loop, and V8 is called whenever there is JavaScript to execute.

The phase where Node spends most of its time is the Poll phase, where it waits for I/O events. If nothing is ready, the loop can block here, waiting for the OS to signal that a file read has completed or a network packet has arrived. Completed work from libuv's thread pool is delivered here as well.

The Full Picture: V8 + libuv

Your JavaScript code sits at the top. The Node.js APIs like fs or http are the interface Node exposes to you. Below that, V8 handles all the JavaScript execution while libuv handles the asynchronous operations involving the OS. Everything eventually reaches the OS at the bottom.

Let us trace exactly what happens when you run this familiar piece of code, step by step.

const fs = require('fs');

fs.readFile('file.json', 'utf8', function(err, data) {

if (err) throw err;

const users = JSON.parse(data);

console.log(`Found ${users.length} users`);

});

console.log('Server is ready to accept requests');

The order of operations:

1. V8 parses and starts executing your script
2. fs.readFile is called, and Node.js hands this to libuv
3. libuv assigns the file read to one of its 4 background threads
4. V8 continues executing. console.log('Server is ready...') runs immediately
5. The background thread finishes reading file.json from disk
6. libuv puts your callback into the poll queue
7. The event loop picks it up in the poll phase
8. V8 executes your callback with the file contents

Notice that in step 4 the main thread did not halt while the JSON file was being read. The waiting is handled by libuv.
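The sequence can be checked by recording events in the order they occur. The sketch below is a self-contained variation of the code above: it writes a small scratch JSON file to the OS temp directory first, so it does not depend on file.json existing. The traceRead helper, the file name, and the sample data are all hypothetical.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

let runs = 0;

// Hypothetical helper: read a scratch file asynchronously and report
// the order in which the main thread and the callback did their work.
function traceRead(done) {
  const file = path.join(os.tmpdir(), `users-${process.pid}-${runs++}.json`);
  fs.writeFileSync(file, JSON.stringify([{ name: 'Ada' }, { name: 'Linus' }]));

  const order = [];

  fs.readFile(file, 'utf8', (err, data) => {
    if (err) return done(err);
    order.push(`callback: found ${JSON.parse(data).length} users`);
    fs.unlinkSync(file); // remove the scratch file
    done(null, order);
  });

  // Runs right after the read has been handed off
  order.push('main thread: readFile returned, still running');
}

traceRead((err, order) => {
  if (err) throw err;
  console.log(order);
});
```

The main-thread entry is always recorded first, because the callback cannot run until the current synchronous code has finished.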

Timers and setImmediate

Timers are also handled by libuv. Whenever setTimeout is called, libuv registers the timer. Once the delay has elapsed, the callback becomes eligible to run, and the event loop runs expired timers in the Timers phase of each tick. The delay is therefore a minimum, not a guarantee.

setImmediate is a Node-specific function that schedules a callback for the Check phase. The Check phase comes right after the Poll phase, so a setImmediate callback runs after the I/O callbacks of the current tick but before the timers of the next iteration.

Let's look at the execution order through an example:

console.log('A - synchronous, runs first');

setTimeout(() => {

console.log('D - setTimeout fires in Timers phase');

}, 0);

setImmediate(() => {

console.log('E - setImmediate fires in Check phase');

});

Promise.resolve().then(() => {

console.log('C - Promise microtask runs before next phase');

});

console.log('B - still synchronous');

// typical output; D and E can appear in either order

// A - synchronous, runs first

// B - still synchronous

// C - Promise microtask runs before next phase

// D - setTimeout fires in Timers phase

// E - setImmediate fires in Check phase

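C (Promise) runs before D and E (setTimeout and setImmediate), even though it is scheduled after them. This is because promise callbacks use the microtask queue, which Node drains as soon as the currently running JavaScript finishes, before the event loop moves on to its next callback. Microtasks always get priority over timer and I/O callbacks. The order of D and E, on the other hand, is not guaranteed when both are scheduled from the main module: a 0 ms timer may or may not have expired by the time the loop first reaches the Timers phase.

What V8 and libuv Do for Different Operations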

| Operation | V8 performs | libuv performs |
|---|---|---|
| const x = 5 + 3 | Compiles and executes it | Nothing |
| fs.readFile() | Calls the Node binding | Sends to the thread pool, queues the callback on completion |
| setTimeout(fn, 500) | Registers callback reference | Tracks the timer, queues callback after 500ms |
| http.get(url, cb) | Calls the binding | Uses OS async networking (epoll/kqueue), queues callback |
| new Promise(fn) | Manages microtask queue | Nothing (purely JS) |
| crypto.pbkdf2() | Calls the binding | Sends CPU-heavy work to the thread pool |

Node.js is a combination of two pieces of software, V8 and libuv, that work together through the event loop. V8 executes JavaScript on the main thread, while libuv takes care of asynchronous work, which keeps Node non-blocking.

The event loop acts like a conductor: on every tick it works through its phases and hands the callbacks that are ready to V8. Avoid blocking the main thread with heavy synchronous work, because nothing else, neither timers nor I/O callbacks, can run while it is busy. Understanding this architecture makes it easier to reason about the order of operations and to choose how to handle heavy tasks.
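The cost of blocking the main thread is easy to measure. The sketch below asks for a 10 ms timer and then keeps the main thread busy with a synchronous loop; measureTimerDelay is a hypothetical helper, and the busy-wait stands in for any heavy synchronous task.

```javascript
// Hypothetical helper: ask for a 10 ms timer, then block the main
// thread for `blockMs` and report when the timer actually fired.
function measureTimerDelay(blockMs, done) {
  const start = Date.now();
  setTimeout(() => done(Date.now() - start), 10);

  // Busy-wait: synchronous work that keeps the event loop from turning
  while (Date.now() - start < blockMs) {
    // intentionally empty
  }
}

measureTimerDelay(200, (elapsed) => {
  console.log(`asked for 10 ms, fired after ${elapsed} ms`);
});
```

The timer cannot fire before the loop ends, so the reported time is at least 200 ms rather than 10 ms. The same delay would hit every pending I/O callback.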
